GPT 5.5 & Kimi K2.6 Built for Autonomous AI Agents

+ Google Agent CLI to build and deploy ADK agents

Today’s top AI Highlights:

& so much more!

Read time: 3 mins

AI Tutorial

I’ve been running a bunch of agents every day for months.

The problem is that I was the one constantly tweaking and learning while they just held onto context.

I tested it out by putting the same Monica agent on Hermes at the same time.

She started creating her own playbook from my edits and keeps getting better without me.

In this blog, I detailed how I did it. You’ll also understand the difference between an agent you manage and one that actually grows with you.

We share hands-on tutorials like this every week, designed to help you stay ahead in the world of AI. If you're serious about leveling up your AI skills and staying ahead of the curve, subscribe now and be the first to access our latest tutorials.

Latest Developments

There's a weird tension at the heart of every long-running agent: keep everything in context and watch quality rot, or prune and lose important things.

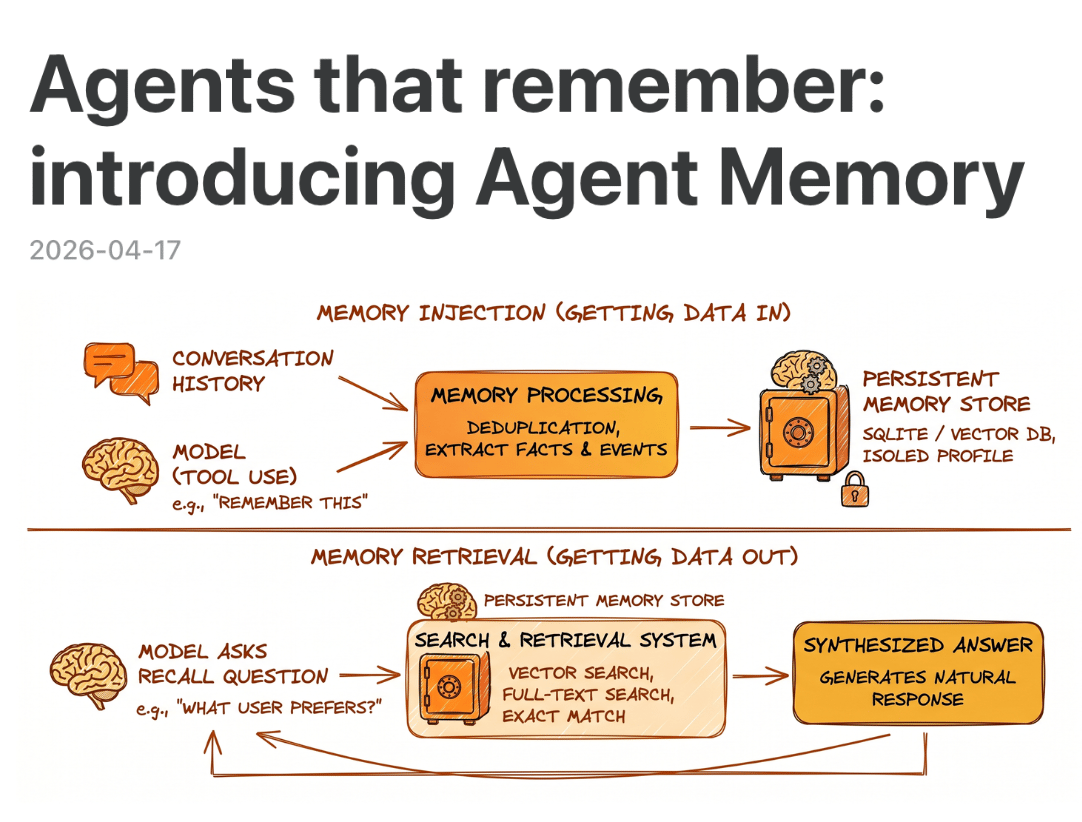

Cloudflare's new Agent Memory, out in private beta, picks a third option - extract the important stuff from conversations at compaction time and make it retrievable on demand. The API is deliberately small: ingest a conversation, remember something specific, recall what you need, list, or forget.

Unlike local-first setups like OpenClaw, where memory is a set of Markdown files the model writes itself, this is a managed architecture built for memory at scale, where ingestion quality and retrieval sophistication actually matter. You can call it from a Worker via binding or hit the REST API from anywhere, and it plugs directly into the Cloudflare Agents SDK.

Key Highlights:

What sticks, what fades - Memories get sorted into facts, events, instructions, and tasks. Facts update in place instead of piling up, and tasks are ephemeral by design.

Retrieval that actually holds up - Five search channels run in parallel and get fused together, so queries work whether you phrase them as keywords or questions.

Smaller models often win - Llama 4 Scout handles the structured work, Nemotron 3 does synthesis. Bigger wasn't better for most stages.

Already running inside Cloudflare - It already powers an OpenCode plugin with shared team memory, an agentic code reviewer, and an internal chatbot. Early access is open via waitlist if you're building agents on Cloudflare.
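To make the "what sticks, what fades" idea concrete, here is a toy in-memory sketch of those semantics. It is not Cloudflare's API: the class `ToyMemoryStore`, its method names, and the keyword-only `recall` are all assumptions made for illustration. The only parts taken from the announcement are the four memory types, facts updating in place, and tasks being ephemeral.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# The four memory types named in the announcement.
KINDS = ("fact", "event", "instruction", "task")


@dataclass
class Memory:
    kind: str
    key: str
    text: str


@dataclass
class ToyMemoryStore:
    """Hypothetical in-memory stand-in, not the real Agent Memory service."""
    facts: Dict[str, Memory] = field(default_factory=dict)
    tasks: Dict[str, Memory] = field(default_factory=dict)
    log: List[Memory] = field(default_factory=list)  # events and instructions

    def remember(self, kind: str, key: str, text: str) -> None:
        if kind not in KINDS:
            raise ValueError(f"unknown memory kind: {kind}")
        memory = Memory(kind, key, text)
        if kind == "fact":
            self.facts[key] = memory   # same key overwrites: facts update in place
        elif kind == "task":
            self.tasks[key] = memory   # tasks live only until completed
        else:
            self.log.append(memory)    # events and instructions accumulate

    def complete_task(self, key: str) -> None:
        # Ephemeral by design: a finished task leaves no trace.
        self.tasks.pop(key, None)

    def forget(self, key: str) -> None:
        self.facts.pop(key, None)
        self.tasks.pop(key, None)
        self.log = [m for m in self.log if m.key != key]

    def list_all(self) -> List[Memory]:
        return list(self.facts.values()) + self.log + list(self.tasks.values())

    def recall(self, query: str) -> List[Memory]:
        # Naive keyword match; the real service fuses several search channels.
        words = query.lower().split()
        return [m for m in self.list_all()
                if any(w in m.text.lower() for w in words)]
```

Remembering the same fact key twice leaves one memory, while two events under the same key leave two. That is the whole difference between "updates in place" and "piles up".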

Mistral Vibe is a terminal-native coding agent powered by Mistral's models that lets you explore, modify, and interact with your codebase through natural language. The latest update adds voice mode: toggle it on to give instructions by voice instead of typing, and enable text-to-speech to have Vibe read its output back to you.

Key highlights:

Voice input - Toggle voice mode on with /voice to talk to Vibe instead of typing.

Text-to-speech readouts - Vibe can read its output back to you, keeping you in flow without having to read through long responses.

Chat rewind - /rewind lets you navigate back through your conversation and fork from any point. Useful for exploring a different approach without starting a new session.

Parallel tool execution - Vibe now reads multiple files, runs searches, and calls several sub-agents simultaneously to speed up sessions.

Session resume - Pick up any previous session with /resume and full context intact. Or launch with vibe --continue to jump straight back into your last session.

This open-source model ran autonomously for 5 days straight, managing incidents, running code, handling system ops with zero human babysitting.

In another run, it spent 13 hours rewriting an 8-year-old financial matching engine, made 1,000+ tool calls, and more than doubled throughput.

That’s Kimi K2.6 by Moonshot AI, a 1T MoE model (32B active) built for long-horizon coding, proactive 24/7 agents, and multi-agent orchestration.

The benchmarks hold up: 58.6 on SWE-Bench Pro, 80.2 on SWE-Bench Verified, 66.7 on Terminal-Bench 2.0, and 54.0 on HLE with tools, sitting alongside Claude Opus 4.6 and GPT-5.4 while being fully open-weight.

Beyond text and code, Moonshot has clearly leaned hard into design this time — K2.6 turns a single prompt into Awwwards-level frontends with hero sections, scroll animations, and even generated image/video assets.

Key Highlights:

Agent Swarm baked into the model - K2.6 can decompose one prompt into up to 300 specialist sub-agents running 1000s of coordinated steps in parallel, with the model itself acting as orchestrator.

Use with OpenClaw and Hermes - K2.6 is explicitly tuned to power proactive always-on agent frameworks like OpenClaw and Hermes. Definitely worth trying if you’re still struggling with the models.

Claw Groups for team-style multi-agent work - A research preview where you and your teammates can bring your own agents, running on different devices, using different models, with their own tools and memory, into one shared workspace. K2.6 sits at the center as coordinator, assigning work to whichever agent is best suited and reassigning tasks if one fails.

The model is OpenAI/Anthropic-API compatible and available now via kimi.com, the Moonshot API, Kimi Code CLI, and Hugging Face.
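OpenAI-API compatible means an existing chat-completions client should only need a different base URL and model name. Here is a minimal sketch that builds such a request with the standard library and does not send it. The base URL and model id below are placeholders, not values from Moonshot's docs, so check the provider's documentation for the real ones.

```python
import json
import urllib.request

# Placeholders: neither value comes from the announcement.
BASE_URL = "https://example.invalid/v1"
MODEL_ID = "kimi-k2.6"  # hypothetical model identifier


def build_chat_request(prompt: str, api_key: str,
                       base_url: str = BASE_URL,
                       model: str = MODEL_ID) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("Summarize this repo's build steps.", api_key="sk-test")
```

Passing `req` to `urllib.request.urlopen` would send it once the placeholders are replaced with real values.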

Quick Bites

Codex goes from coding agent to desktop Copilot

The new Codex takes its "(almost) everything" tagline pretty seriously: background computer use lets multiple agents click around your Mac without stepping on your own work, and a native browser turns frontend iteration into a point-and-annotate loop instead of a prompt-and-hope one. Add image generation inside the agent loop, a memory preview, and cross-session automations that resume tasks across days, and OpenAI is quietly reshaping Codex into a desktop control surface rather than a coding sidekick. Rolling out now to Codex desktop app users.

Email inbox for your AI agents

Every agent can now have its own inbox with Cloudflare's Email Service, now in public beta. Beyond the inbox itself, email gives agents asynchronous work, persistent state across replies, and a channel users already know how to use. It's a neat counterpoint to the default assumption that agents need bespoke chat interfaces. Also shipping: an Email MCP server, CLI tooling, and an open-source Agentic Inbox you can deploy in one click.

OpenAI drops its new frontier model GPT 5.5

OpenAI shipped GPT-5.5 today (codename "Spud"), six weeks after 5.4 and one week after Opus 4.7. Interesting to see the release cadence now measured in "days since last frontier model." 😅 It takes the top spot on Terminal-Bench 2.0 (82.7%), FrontierMath Tier 4 (35.4%), and long-context retrieval at 1M tokens, while Opus 4.7 hangs onto SWE-Bench Pro and MCP Atlas. API access is "coming very soon" at $5/$30 per million tokens, with the Pro variant at a spicy $30/$180.
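For a feel of what those prices mean per job, here is a quick back-of-the-envelope calculator. It assumes the two figures are input and output prices per million tokens, in that order, which is the usual convention but is not spelled out above. The token counts in the example are made up.

```python
# Assumed reading of "$5/$30": USD per million input / output tokens.
STANDARD_RATES = (5.0, 30.0)
PRO_RATES = (30.0, 180.0)


def estimate_cost_usd(input_tokens: int, output_tokens: int,
                      rates: tuple = STANDARD_RATES) -> float:
    """Estimated USD cost of one request at the given per-million rates."""
    input_rate, output_rate = rates
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


# A hypothetical agent run: 200k tokens in, 50k tokens out.
standard = estimate_cost_usd(200_000, 50_000)
pro = estimate_cost_usd(200_000, 50_000, rates=PRO_RATES)
print(f"standard: ${standard:.2f}, pro: ${pro:.2f}")
```

Under that reading, the same run costs six times more on Pro, since both rates scale by the same factor.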

OpenAI and Google shipped their enterprise agent platforms on the same day

Google's Gemini Enterprise Agent Platform absorbs Vertex AI. All future roadmap ships through Agent Platform, which bundles Agent Studio (low-code), an upgraded ADK, and a proper governance stack: Agent Identity, Registry, Gateway, plus runtime that supports multi-day workflows with persistent Memory Bank. It gives a single platform to build agents, scale to production, establish centralized control, and optimize with full traces.

A few hours later, OpenAI released Workspace Agents in ChatGPT. These are Codex-powered team agents that keep running while you're offline, plug into Slack, Drive, Salesforce, and Notion, and can be scheduled or triggered by events. Build one in the sidebar by describing a workflow in plain English, then let it handle month-end close or inbound lead routing while your team does literally anything else. Free until May 6, after which credit-based pricing kicks in.

Tools of the Trade

Agents CLI in Agent Platform: Google released an open-source CLI that gives AI coding agents like Claude Code, Cursor, and Gemini CLI a machine-readable path into Google Cloud's agent stack. Describe the agent you want in a simple prompt, and the coding agent uses the CLI plus the bundled skills to scaffold an ADK project, run evals, and deploy to Agent Runtime, Cloud Run, or GKE.

Mercury: Open-source CLI and Telegram AI agent that asks before it acts, with guardrails like shell blocklists, folder-scoped file access, and explicit approval for sensitive commands. Personality lives in soul.md and persona.md, and daily token budgets keep it from burning through your API credits.

Kimi Vendor Verifier: Moonshot’s open-source toolkit that checks whether third-party inference providers are actually running Kimi K2 correctly. It runs six benchmarks to separate real model issues from sloppy engineering at the vendor layer.

Cua Driver: An open-source macOS driver that lets any agent like Claude Code, Codex, or your own click and type inside real Mac apps in the background, without stealing your cursor or pulling focus across Spaces. It works by tapping into a few of Apple's private, undocumented system APIs to deliver input directly to a target app, so the app responds while your foreground window keeps everything it had.

Awesome LLM Apps - A curated collection of LLM apps with RAG, AI Agents, multi-agent teams, MCP, voice agents, and more. The apps use models from OpenAI, Anthropic, Google, and open-source models like DeepSeek, Qwen, and Llama that you can run locally on your computer.

(Now accepting GitHub sponsorships)
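The "ask before it acts" guardrails mentioned for Mercury above are a pattern worth borrowing for any agent. Here is a rough sketch of the three checks: a shell blocklist, folder-scoped file access, and a daily token budget. None of this is Mercury's actual code. The command lists, function names, and limits are hypothetical examples.

```python
import shlex
from pathlib import Path

# Hypothetical policy lists, chosen only for illustration.
BLOCKED = {"rm", "sudo", "dd", "mkfs"}
NEEDS_APPROVAL = {"git", "curl", "pip"}


def check_command(command: str) -> str:
    """Classify a shell command as 'block', 'ask', or 'allow'."""
    parts = shlex.split(command)
    if not parts:
        return "block"
    program = Path(parts[0]).name  # so /bin/rm is treated like rm
    if program in BLOCKED:
        return "block"
    if program in NEEDS_APPROVAL:
        return "ask"
    return "allow"


def path_in_scope(path: str, root: str) -> bool:
    """True if path resolves to root itself or something inside it."""
    root_resolved = Path(root).resolve()
    resolved = Path(root, path).resolve()  # an absolute path replaces root
    return resolved == root_resolved or root_resolved in resolved.parents


class TokenBudget:
    """Refuse work once a daily token allowance would be exceeded."""

    def __init__(self, daily_limit: int) -> None:
        self.daily_limit = daily_limit
        self.used = 0

    def spend(self, tokens: int) -> bool:
        if self.used + tokens > self.daily_limit:
            return False
        self.used += tokens
        return True
```

An agent loop would run `check_command` before every shell call, prompt the user on `"ask"`, and stop calling the model once `spend` returns `False`.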

That’s all for today! See you tomorrow with more such AI-filled content.

Don’t forget to share this newsletter on your social channels and tag Unwind AI to support us!

PS: We curate this AI newsletter every day for FREE, your support is what keeps us going. If you find value in what you read, share it with at least one, two (or 20) of your friends 😉
