Talking to AI Agents is All You Need

4 Simple Habits to Get 10× Better Results from AI Agents

You've tried Claude Code. Cursor. Antigravity.

The demos looked great, but the results feel mediocre. You're not missing a framework. You're not missing a prompt library. You're missing a skill.

The skill is talking to agents. Not prompting. Talking. Communicating clearly enough that they build what you actually need.

The agent is a mirror. Vague input, vague output. Clear input, clear output. No trick changes that.

What I learned the hard way

My early prompts weren't terrible. They just weren't good enough.

"Build a customer feedback analyzer. It should pull from Google Docs, Slack, and Slides using their APIs. Aggregate everything into one view and surface the main themes. Use Python and Streamlit for the UI."

Sounds reasonable. Has detail. Mentions the tech stack.

I'd get something back, but the connectors would break in weird ways. After a few iterations I'd have something that technically worked. But the output was useless. Themes like "product" and "feedback" and "issue." No insight a human couldn't have guessed in ten seconds.

It took me embarrassingly long to realize the problem wasn't the agent.

Now when I need the same thing, I don't start with the architecture. I start with the situation.

"I'm building for a PM who spends three hours every Monday copying feedback from Slack, Docs, and Slides into a spreadsheet. He's looking for patterns but drowning in noise. A useful insight looks like 'users mentioned onboarding friction 40% more this week than last, mostly in Slack.' A useless insight looks like 'common themes: product, feedback, issues.' Build something that surfaces the first kind, not the second."

Same task. Completely different input. The agent now knows what success looks like.

The difference isn't prompting technique. It's that I actually thought through what the output needed to do before I asked for it.

I wrote about this more in "Context is the New Moat." If you want to go deeper on how to structure and curate context for agents, start there.
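The "useful insight" in that second prompt is, underneath, a small piece of arithmetic: count how often a theme shows up each week, compare the two counts, and note where most of this week's mentions came from. Here's a minimal Python sketch of that idea, assuming the feedback has already been tagged with a week, a source, and a theme. The sample data and the function names are hypothetical, made up for illustration.

```python
from collections import Counter

# Hypothetical sample data: one (week, source, theme) tuple per tagged message.
# How messages get tagged (by hand or by a model) is assumed, not shown.
MENTIONS = (
    [("last", "slack", "onboarding friction")] * 3
    + [("last", "docs", "onboarding friction")] * 2
    + [("this", "slack", "onboarding friction")] * 4
    + [("this", "docs", "onboarding friction")] * 2
    + [("this", "slides", "onboarding friction")] * 1
    + [("this", "slack", "pricing")] * 2
)


def week_over_week(mentions, theme):
    """Return (percent change vs. last week, top source this week) for a theme."""
    last = sum(1 for week, _, t in mentions if week == "last" and t == theme)
    by_source = Counter(
        src for week, src, t in mentions if week == "this" and t == theme
    )
    this = sum(by_source.values())
    if last == 0:
        # Without a baseline, a percent change is meaningless.
        raise ValueError(f"no mentions of {theme!r} last week to compare against")
    change = (this - last) * 100 / last
    top_source = by_source.most_common(1)[0][0] if by_source else None
    return change, top_source


def describe(mentions, theme):
    """Turn the numbers into the kind of sentence a PM can act on."""
    change, source = week_over_week(mentions, theme)
    direction = "more" if change >= 0 else "less"
    sentence = f"Users mentioned {theme} {abs(change):.0f}% {direction} this week than last"
    if source:
        sentence += f", mostly in {source.capitalize()}"
    return sentence + "."


print(describe(MENTIONS, "onboarding friction"))
```

With this sample data (five mentions last week, seven this week, four of them in Slack), the sentence reports a 40% increase, mostly in Slack. The point isn't the code. It's that once you can say what a good insight looks like, the thing to build becomes obvious.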

What actually works for me

After building hundreds of agents, I've noticed four habits that separate effective communication from the rest.

1. Lead with why, not what. Don't start with the task. Start with the problem.

"Build a dashboard" is what. "Our users can't spot trends in 500 daily feedback messages" is why.

The why shapes every decision the agent makes downstream. When you skip it, the agent guesses your intent. It guesses wrong. Then you blame the output when the input was the problem.

2. Show, don't describe. Examples beat descriptions ten to one.

"Make it clean and professional" means nothing. Showing three examples of what you consider clean and professional means everything.

When I build something for the Awesome LLM Apps repo, I don't describe what a good README looks like. I show the agent three READMEs of the most popular agent projects and say "like these." The output matches because the input was specific.

3. Constraints that actually matter. Not every constraint. The ones that will change what gets built.

Bad: listing fifteen constraints including obvious ones like "should work correctly."

Good: "Has to work with free-tier API limits" or "Users will abandon if setup takes more than five minutes."

The right constraints narrow the solution space to where good answers live. The wrong constraints are noise.

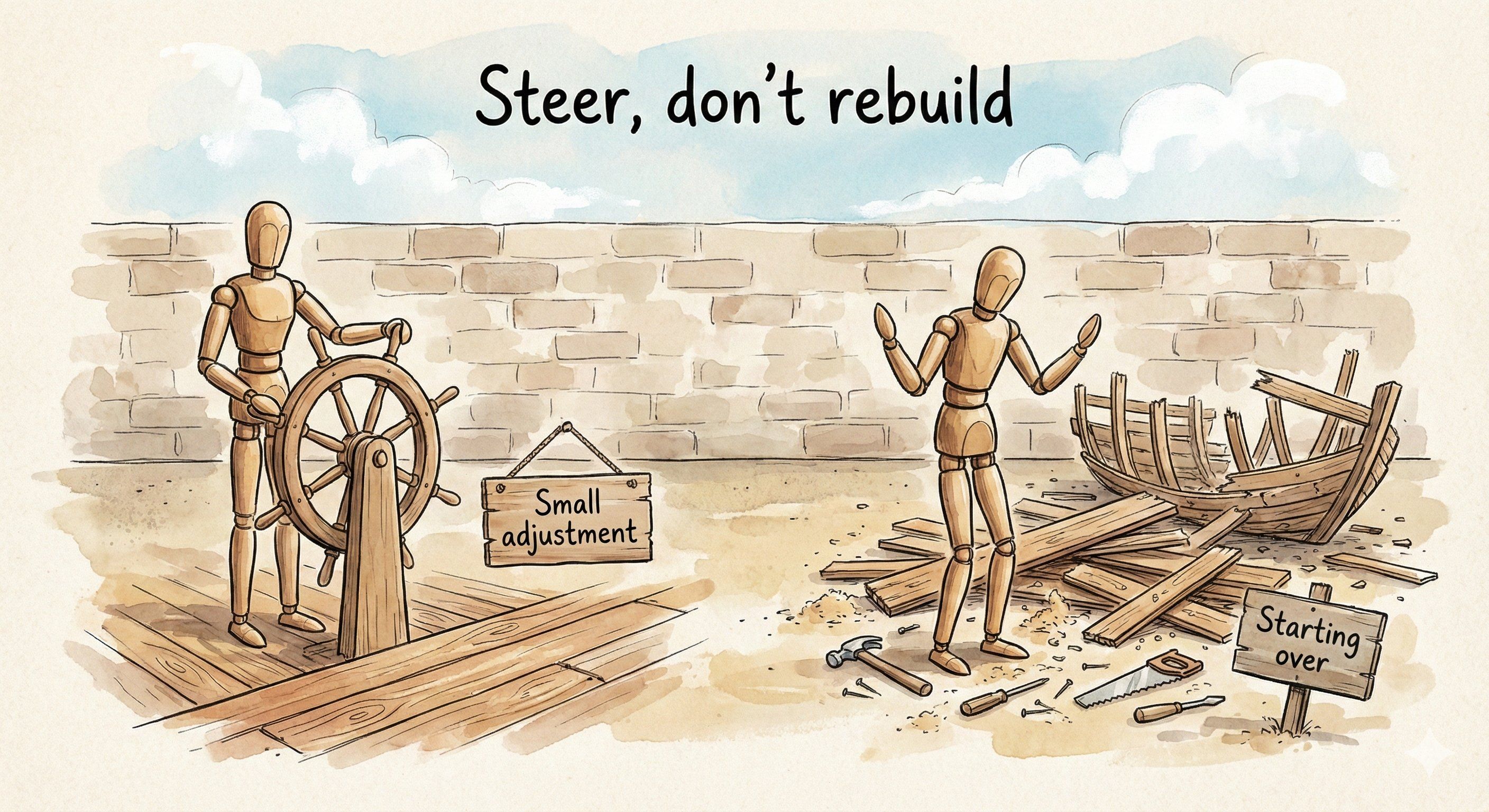

4. React, don't rewrite. When output is wrong, don't start over with a new prompt.

React to what you see. "That's close, but the tone is too formal." "This misses the edge case where users have no data yet." "The structure is right but the examples feel generic."

Conversation beats one-shots. Most people rewrite entire prompts when a single sentence of feedback would fix it. I rarely write more than two sentences when iterating. The context is already there. I just steer the agent.

This is also how you stay in flow. I wrote in "Vibe Coding" about staying in problem-space instead of dropping into implementation. Same principle here. Rewriting a prompt from scratch pulls you out of the problem-space. Reacting keeps you in it.
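If it helps to see the four habits as a structure, here's one way to sketch them in Python. This is an illustration, not a template to copy: the `AgentBrief` class, its field names, and the `react` helper are all hypothetical, and the role/content message list is just a common chat convention, not any specific vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class AgentBrief:
    """Habits 1-3: lead with why, show examples, state constraints that matter."""
    why: str                                               # the problem, stated first
    task: str                                              # what to build, stated second
    good_examples: list[str] = field(default_factory=list)
    bad_examples: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)  # only the ones that change the build

    def render(self) -> str:
        """Assemble the brief as plain text, skipping any empty section."""
        parts = [f"Problem: {self.why}", f"Task: {self.task}"]
        sections = [
            ("Useful output looks like:", self.good_examples),
            ("Useless output looks like:", self.bad_examples),
            ("Constraints:", self.constraints),
        ]
        for title, items in sections:
            if items:
                parts.append(title + "\n" + "\n".join(f"- {item}" for item in items))
        return "\n\n".join(parts)


def react(history, feedback):
    """Habit 4: add one short piece of feedback instead of rewriting the brief."""
    return history + [{"role": "user", "content": feedback}]


brief = AgentBrief(
    why="A PM spends three hours every Monday copying feedback into a spreadsheet.",
    task="Surface week-over-week changes in feedback themes.",
    good_examples=["Users mentioned onboarding friction 40% more this week than last, mostly in Slack."],
    bad_examples=["Common themes: product, feedback, issues."],
    constraints=["Has to work with free-tier API limits."],
)

history = [{"role": "user", "content": brief.render()}]
# The agent's reply would be appended here as an "assistant" message.
history = react(history, "That's close, but the tone is too formal.")
```

Notice that `react` never touches the original brief. The first message stays in the history, and each correction is a sentence or two layered on top of it.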

Agents amplify what you communicate

Here's what nobody tells you about working with agents daily.

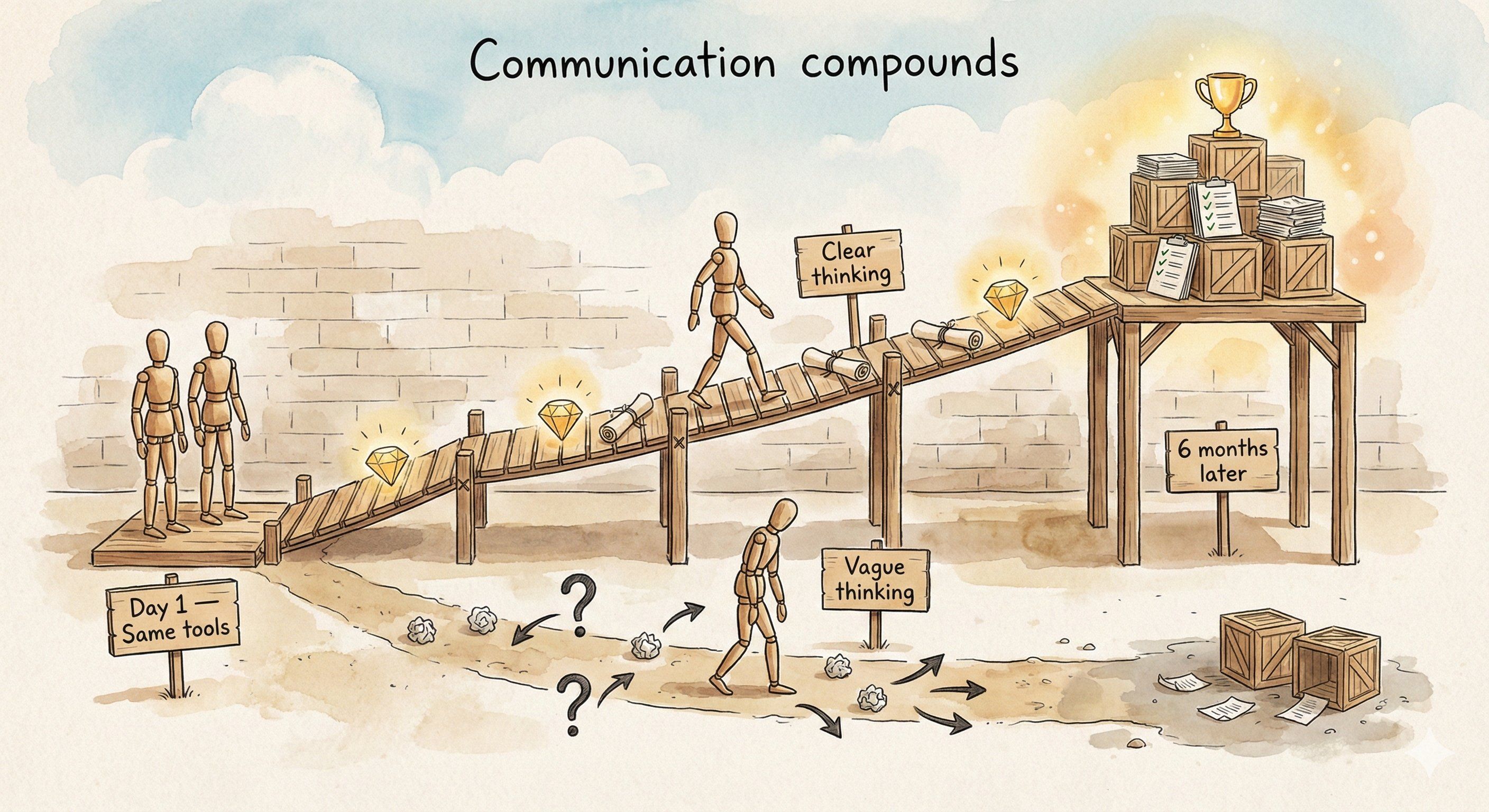

Agents expose your communication weaknesses instantly.

In the old world, you could be a vague thinker and hide it. You'd write a fuzzy spec, engineers would ask clarifying questions over days, and the translation process would paper over your lack of clarity. In "The Modern AI PM," I wrote about how that translation layer is compressing. This is the other side of that: agents don't ask clarifying questions. They take your vague input and produce vague output immediately. There's nowhere to hide.

This is uncomfortable. But it's also useful.

Because the inverse is true too. Clear thinking produces clear output at speed.

I've watched people spend hours wrestling with agent output, getting frustrated, concluding that agents are overhyped. Meanwhile someone else ships the same thing in twenty minutes. Same model. Same task. The difference is always upstream.

After a few months of working this way, some people ship 10x more than others using the same tools.

Not because they're smarter. Because their communication compounds.

Building the skill

There's no template that makes you good at this. Just reps.

Before you prompt, try the "new hire" test. Could you explain this task to a brilliant person on their first day who knows nothing about your situation? If you can't, your thinking isn't clear enough yet.

When output misses the mark, don't blame the agent. Ask: what did I fail to communicate? That's where the learning is.

Pick one habit. Practice it for a week.

Lead with why. Show examples. State real constraints. React instead of rewriting.

Here's an exercise that helped me. Take your last failed agent interaction. Don't look at the output. Look at your input. What did you assume the agent knew? What context did you skip? What did "good" look like in your head that you never said out loud?

Write that down. That's your gap.

The people searching for the perfect prompt are solving the wrong problem. Prompts are just the residue of clear thinking. Get clearer and your prompts improve automatically.

The agent is a mirror. What it reflects is up to you.

That's the real skill. Not talking to agents. Thinking clearly enough that talking becomes easy.
