Unwind AI

Claude Mythos Too Powerful to be Released (AGI for Coding)

+ Karpathy’s knowledge base builder as a Skill

Today’s top AI Highlights:

& so much more!

Read time: 3 mins

AI Tutorial

Six AI agents run my entire life while I sleep.

Not a demo. Not a weekend project.

A real team that works 24/7, making sure I'm never behind. Research done. Content drafted. Code reviewed. Newsletter ready. By the time I open Telegram in the morning, they've already put in a full shift.

By the end of this, you will understand exactly how to build an autonomous AI agent team that runs while you sleep.

We share hands-on tutorials like this every week, designed to help you stay ahead in the world of AI. If you're serious about leveling up your AI skills and staying ahead of the curve, subscribe now and be the first to access our latest tutorials.

Latest Developments

Every frontier lab races to ship its most powerful model. Anthropic did the opposite.

Claude Mythos Preview, the company's strongest model by a mile, won't be available to the public. Its ability to find and exploit software vulnerabilities is so strong that Anthropic is treating it as a security event, not a product launch.

Under Project Glasswing, a coalition including AWS, Apple, Google, Microsoft, NVIDIA, and JPMorganChase gets exclusive access to use Mythos for defensive cybersecurity work. The model has already uncovered thousands of zero-days in every major OS and browser, some hidden for decades.

The most capable AI model in the world exists right now. And the fact that you can't use it might be the most important thing about it.

Key Highlights:

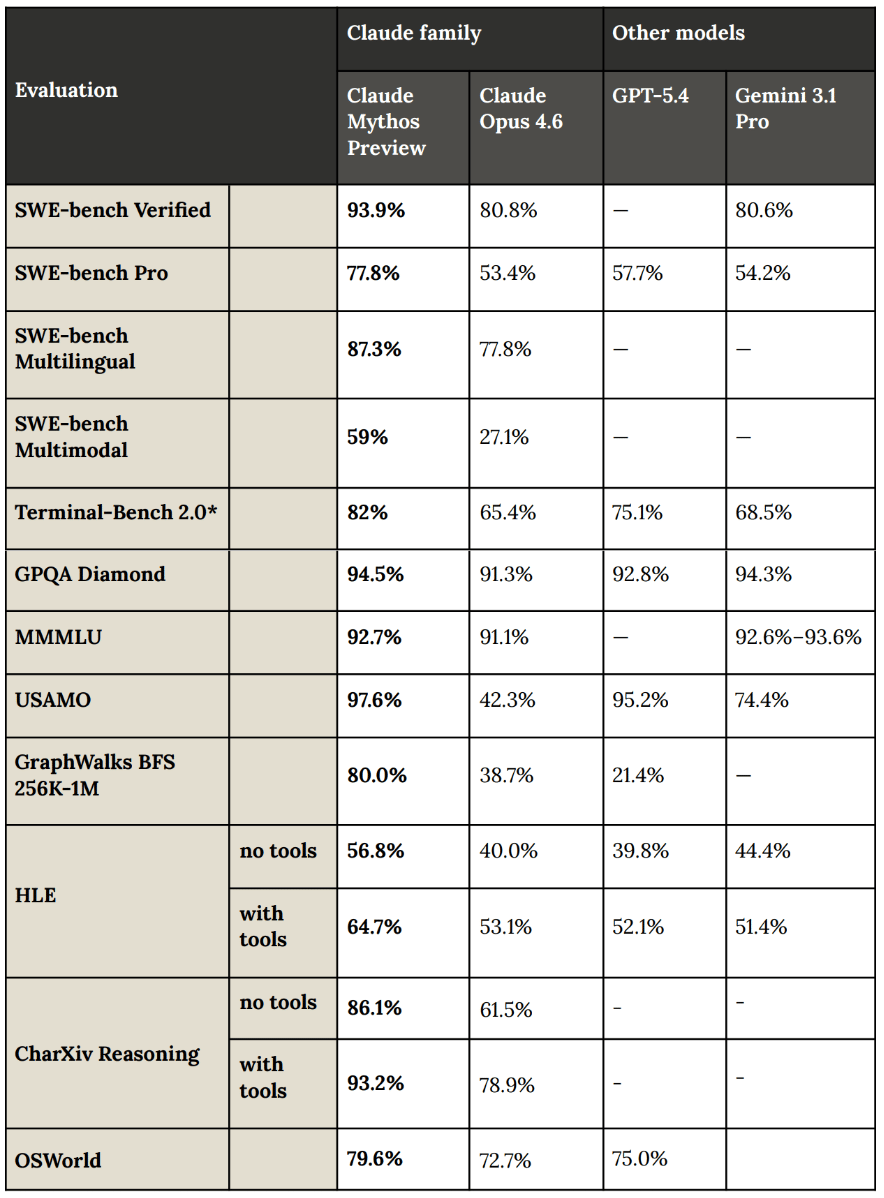

Performance gap is massive: Mythos scores 93.9% on SWE-bench Verified vs. Opus 4.6's 80.8% (a 13.1-point gap), and on SWE-bench Pro the gap is even wider: 77.8% vs. 53.4% (24.4 points).

Real-world finds: A 27-year-old OpenBSD vulnerability, an FFmpeg bug that dodged 5 million automated tests, and a fully autonomous Linux kernel privilege escalation chain, all found without human guidance.

Gated rollout: Around 40 additional organizations beyond the launch partners can access Mythos for scanning critical infrastructure. Open-source maintainers can apply via Claude for Open Source.

Safeguards before scale: Anthropic's plan is to develop and ship new cybersecurity safeguards with an upcoming Opus model before bringing Mythos-class capabilities to broader users. A Cyber Verification Program is coming for security professionals.

Read the full Project Glasswing announcement here.

Andrej Karpathy posted his LLM Knowledge Bases workflow just last week, and someone has already shipped it as a Skill.

Graphify is an open-source Claude Code skill that takes any folder of code, PDFs, images, and markdown and compiles it into a navigable knowledge graph with a single command.

Type /graphify in Claude Code, point it at a directory, and it produces an interactive graph visualization, a backlinked Obsidian vault, Wikipedia-style wiki articles, and a structured report highlighting the most connected concepts and surprising cross-references.

It supports code in 13 programming languages, extracts from PDFs, and even processes images via Claude's vision capabilities.
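To make the idea concrete, here is a minimal sketch of the graph-building half in Python. It is not Graphify's code: Graphify uses Claude to extract concepts from code, PDFs, and images, while this toy version only follows explicit Obsidian-style [[wikilinks]] between markdown notes. The function names (build_graph, backlinks, most_connected) are hypothetical.

```python
import re

# Matches [[Target]], [[Target|alias]], and [[Target#Heading]]; group 1 is the target note name.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def _targets(name: str, text: str) -> list[str]:
    """Return the link targets in one note, skipping empty names and self-links."""
    found = (m.group(1).strip() for m in WIKILINK.finditer(text))
    return [t for t in found if t and t != name]


def build_graph(notes: dict[str, str]) -> dict[str, set[str]]:
    """Undirected adjacency map: each note -> notes it links to or is linked from."""
    graph: dict[str, set[str]] = {}
    for name, text in notes.items():
        graph.setdefault(name, set())  # keep notes with no links as isolated nodes
        for target in _targets(name, text):
            graph[name].add(target)
            graph.setdefault(target, set()).add(name)  # targets may not exist as notes yet
    return graph


def backlinks(notes: dict[str, str]) -> dict[str, list[str]]:
    """Directed view: target -> sorted list of notes that link to it."""
    incoming: dict[str, set[str]] = {}
    for name, text in notes.items():
        for target in _targets(name, text):
            incoming.setdefault(target, set()).add(name)
    return {target: sorted(sources) for target, sources in incoming.items()}


def most_connected(graph: dict[str, set[str]], k: int = 3) -> list[tuple[str, int]]:
    """Top-k notes by number of distinct neighbours, ties broken alphabetically."""
    ranked = sorted(graph.items(), key=lambda item: (-len(item[1]), item[0]))
    return [(name, len(neighbours)) for name, neighbours in ranked[:k]]


if __name__ == "__main__":
    notes = {
        "Transformers": "Built on [[Attention]] and [[Tokenization]].",
        "Attention": "See [[Transformers]] and [[Softmax|the softmax]].",
        "RAG": "Retrieval plus [[Transformers]].",
    }
    print(most_connected(build_graph(notes), k=2))
    print(backlinks(notes)["Transformers"])
```

Running it prints [('Transformers', 3), ('Attention', 2)] and ['Attention', 'RAG']. Ranking by raw link count is the simplest stand-in for "most connected concepts"; a real tool would more plausibly weigh the relationships it extracts.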

The open-source shipping speed is insane!

Google Cloud just open-sourced a multi-agent orchestration framework. It lets Claude Code, Gemini CLI, and Codex all work on your codebase at the same time.

Scion is an experimental testbed that spins up each agent in its own container with separate credentials, a dedicated git worktree, and its own workspace, so your agents can genuinely run in parallel without stepping on each other.
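The per-agent git worktree is plain git, so that piece of the isolation is easy to illustrate on its own. A minimal sketch, assuming a hypothetical layout where each agent gets a sibling directory and its own agent/<role> branch; it only builds the git worktree add commands and does not reproduce anything Scion does internally.

```python
import shlex
from pathlib import Path


def worktree_commands(repo: Path, roles: list[str], base: str = "main") -> list[list[str]]:
    """Build (but don't run) one `git worktree add` command per agent role.

    Hypothetical layout: each agent gets a sibling directory named <repo>-<role-slug>,
    checked out on a new branch agent/<role-slug> forked from `base`.
    """
    commands = []
    for role in roles:
        slug = "-".join(role.lower().split())  # "Security Auditor" -> "security-auditor"
        worktree_dir = repo.parent / f"{repo.name}-{slug}"
        commands.append([
            "git", "-C", str(repo),
            "worktree", "add", "-b", f"agent/{slug}", str(worktree_dir), base,
        ])
    return commands


if __name__ == "__main__":
    for cmd in worktree_commands(Path("/work/app"), ["Security Auditor", "QA Tester"]):
        print(shlex.join(cmd))
```

Passing each command to subprocess.run(cmd, check=True) would actually create the worktrees. Each agent then commits on its own branch in its own directory, so nothing collides until you choose to merge.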

You define agent roles like "Security Auditor" or "QA Tester" via templates, and Scion handles spinning them up locally, on remote VMs, or across Kubernetes clusters.

What's particularly interesting is its coordination philosophy: rather than prescribing rigid orchestration patterns, agents dynamically learn a shared CLI tool and decide themselves how to coordinate.

It's early and experimental, but local mode is stable enough to start playing with today.

Key Highlights:

Groves as project namespaces: Each project gets a .scion directory (called a Grove) that holds all agent config, templates, and state, keeping everything scoped cleanly to your repo.

Layered agent state tracking: Scion tracks agents across three dimensions: lifecycle phase, cognitive activity (thinking, executing, waiting), and freeform detail, so you can actually tell whether an agent is stuck or just thinking.

Attach/detach workflow: Agents run in tmux sessions; you can attach for human-in-the-loop interaction, queue messages while detached, and tunnel into remote agents securely.
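Here is a minimal sketch of the three-dimension state model plus a message queue for detached agents, in Python. It illustrates the idea and is not Scion's schema: the lifecycle values, the 10-minute "stuck" timeout, and every name below are hypothetical; only the thinking/executing/waiting activities come from the description above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Phase(Enum):
    """Lifecycle phase (hypothetical values)."""
    STARTING = "starting"
    RUNNING = "running"
    STOPPED = "stopped"


class Activity(Enum):
    """Cognitive activity, using the three examples named in the text."""
    THINKING = "thinking"
    EXECUTING = "executing"
    WAITING = "waiting"


@dataclass
class AgentState:
    name: str
    phase: Phase
    activity: Activity
    detail: str = ""          # freeform detail, e.g. "running the test suite"
    last_change: float = 0.0  # time (seconds) of the last activity/detail update
    inbox: list[str] = field(default_factory=list)  # messages queued while detached

    def update(self, activity: Activity, detail: str, now: float) -> None:
        """Record a new activity and detail; any update counts as progress."""
        self.activity, self.detail, self.last_change = activity, detail, now

    def is_probably_stuck(self, now: float, timeout_s: float = 600.0) -> bool:
        """Hypothetical heuristic: running, not waiting on input, and silent for too long."""
        return (
            self.phase is Phase.RUNNING
            and self.activity is not Activity.WAITING
            and now - self.last_change > timeout_s
        )

    def queue_message(self, message: str) -> None:
        """Hold a message until a human attaches."""
        self.inbox.append(message)

    def attach(self) -> list[str]:
        """Simulate attaching: hand over queued messages in order and clear the queue."""
        pending, self.inbox = self.inbox, []
        return pending
```

The design point this tries to capture: "stuck" is inferred from how stale the activity and detail fields are, not from the lifecycle phase alone, which is one reason a separate cognitive-activity dimension is useful.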

Please note that it’s not an officially supported Google product.

Quick Bites

OpenClaw + Ollama + Gemma 4 26B for local agentic setup

Google's Gemma 4 dropped last week, and the 26B MoE variant is absurdly capable for its size. You can now pair it with OpenClaw for a fully local AI agent setup in about three steps and a few minutes: install Ollama, pull the model, and launch OpenClaw with Gemma as the backend. Local-first agentic AI that doesn't phone home; the lobster approves. 🦞
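Before pointing OpenClaw at the model, it can help to confirm Ollama is actually serving it. A minimal standard-library sketch against Ollama's local /api/chat endpoint on its default port 11434. The model tag is an assumption (run ollama list to see the exact name you pulled), and OpenClaw's own configuration is not shown here.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local endpoint
MODEL = "gemma4:26b"  # assumed tag; check `ollama list` for the name you actually pulled


def build_chat_request(prompt: str, model: str = MODEL) -> urllib.request.Request:
    """Build a non-streaming chat request for the local Ollama server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON object instead of a token stream
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, model: str = MODEL) -> str:
    """Send the prompt and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, model), timeout=300) as resp:
        body = json.load(resp)
    return body["message"]["content"]
```

With the Ollama server running and the model pulled, chat("Reply with one word: ready?") should return the model's answer; a connection error usually means the server isn't up, and an error response usually means the tag doesn't match.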

AWS just turned S3 into a file system

Amazon S3 now doubles as a file system. S3 Files lets any compute instance, container, or Lambda function mount an S3 bucket with full POSIX-style semantics. Reads hit a local cache for speed, while writes translate to efficient S3 API calls behind the scenes. If you've ever cursed at a data staging pipeline just to get file-based tooling working on your S3 data lake, this one's for you.
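The split between cached reads and translated writes is the core trick, and a toy model shows why it matters. This is a hypothetical illustration, not how S3 Files is implemented: the toy store below only supports whole-object GET and PUT, so repeated reads are served from a local cache, and an append has to become a read-modify-write PUT of the whole object.

```python
class ToyObjectStore:
    """Stand-in for a bucket: whole-object GET and PUT only; counts GETs for illustration."""

    def __init__(self) -> None:
        self.objects: dict[str, bytes] = {}
        self.gets = 0

    def get(self, key: str) -> bytes:
        self.gets += 1
        return self.objects[key]

    def put(self, key: str, data: bytes) -> None:
        self.objects[key] = data


class CachedFileView:
    """File-style reads and writes over an object store, with a local read cache."""

    def __init__(self, store: ToyObjectStore) -> None:
        self.store = store
        self.cache: dict[str, bytes] = {}

    def read(self, path: str) -> bytes:
        if path not in self.cache:  # only the first read goes to the store
            self.cache[path] = self.store.get(path)
        return self.cache[path]

    def write(self, path: str, data: bytes) -> None:
        self.store.put(path, data)  # write-through: the store stays authoritative
        self.cache[path] = data     # keep the cache consistent with what we wrote

    def append(self, path: str, data: bytes) -> None:
        # No partial updates in this toy store, so append = read-modify-write of the whole object.
        self.write(path, self.read(path) + data)
```

The toy deliberately ignores the hard part a real system must solve: invalidating the cache when some other writer changes the object.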

Karpathy’s wiki builder as a native Skill in Hermes Agent

Nous Research just shipped Karpathy's LLM-Wiki pattern as a native Hermes Agent skill. Your self-improving agent can now spin up persistent markdown knowledge bases that compile, cross-reference, and lint themselves over time. Hermes already dogfooded it by studying the web, code, and Nous' own papers to build a full research vault around their projects. Run hermes update, type /llm-wiki <research x>, and let the agent do the librarian work.
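The "lint themselves" part is the easiest to picture. A minimal sketch of a wiki linter over in-memory markdown pages, assuming Obsidian-style [[links]]: it flags links to pages that don't exist and pages nothing links to. This is illustrative only, not the Hermes skill's implementation, and lint_wiki is a hypothetical name.

```python
import re

# [[Page]], [[Page|alias]], [[Page#Section]] -> group 1 is "Page"
LINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def lint_wiki(pages: dict[str, str]) -> dict[str, list]:
    """Return broken links as (page, missing_target) pairs and orphan pages nothing links to."""
    broken: list[tuple[str, str]] = []
    linked: set[str] = set()
    for name, text in pages.items():
        for match in LINK.finditer(text):
            target = match.group(1).strip()
            if target == name:
                continue  # ignore self-links
            linked.add(target)
            if target not in pages:
                broken.append((name, target))
    orphans = sorted(page for page in pages if page not in linked)
    return {"broken_links": sorted(broken), "orphans": orphans}


if __name__ == "__main__":
    wiki = {
        "Index": "Start at [[GLM-5.1]] and [[Mythos]].",
        "GLM-5.1": "Compare with [[Opus 4.6]]; back to [[Index]].",
        "Scratch": "todo",
    }
    print(lint_wiki(wiki))
```

An agent running this after each compile pass could either write the missing pages or fix the links; entry pages only avoid the orphan list if something links back to them.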

Z.ai GLM-5.1 is Opus 4.6-level at one-third the cost

Z.ai dropped GLM-5.1, a post-training refinement of their 744B MoE model that claims 94.6% of Claude Opus 4.6's coding performance at one-third the cost. It’s trained entirely on 100K Huawei Ascend chips with zero Nvidia silicon in the mix (dangg). The model can stay on a single coding task for up to eight hours, autonomously handling everything from planning through testing. It also scores 58.4 on SWE-Bench Pro, ahead of GPT-5.4 and Gemini 3.1 Pro. Weights are out under the MIT license.
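Taking the two headline claims at face value, and assuming "cost" means a comparable per-task price, coding performance per dollar relative to Opus 4.6 works out as follows, with P for coding performance and C for cost:

```latex
\frac{P_{\text{GLM}} / C_{\text{GLM}}}{P_{\text{Opus}} / C_{\text{Opus}}}
  = \frac{0.946\,P_{\text{Opus}} \,/\, \tfrac{1}{3}\,C_{\text{Opus}}}{P_{\text{Opus}} / C_{\text{Opus}}}
  = 0.946 \times 3 \approx 2.84
```

That is roughly 2.8x the coding performance per dollar, under those assumptions.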

Tools of the Trade

AutoCLI: A blazing-fast, memory-safe CLI tool that fetches information from any website with a single command. It covers X, Reddit, YouTube, HackerNews, Bilibili, Zhihu, Xiaohongshu, and 55+ sites, and supports controlling Electron desktop apps and integrating local CLI tools (gh, docker, kubectl).

Clicky: An open-source macOS app that puts an AI teacher right next to your cursor. It can see your screen, listen to you via push-to-talk, talk back with TTS, and even point at specific UI elements as it explains things. Runs on Claude, AssemblyAI, and ElevenLabs behind a Cloudflare Worker proxy.

Freestyle: A sandbox infrastructure platform that gives coding agents full Linux VMs, not containers, with sub-second startup, live forking, and pause/resume so you only pay when things are actually running.

Awesome LLM Apps: A curated collection of LLM apps with RAG, AI Agents, multi-agent teams, MCP, voice agents, and more. The apps use models from OpenAI, Anthropic, Google, and open-source models like DeepSeek, Qwen, and Llama that you can run locally on your computer.

(Now accepting GitHub sponsorships)

Hot Takes

Magical OpenClaw experiences that use frontier models cost $300-1,000/day today, heading to $10,000/day and more. The future shape of the entire technology industry will be how to drive that to $20/month.

The permanent underclass began today

Claude Mythos won't be available to the public, but only billion dollar companies, governments, researchers, ...

~ Lisan al Gaib

That’s all for today! See you tomorrow with more such AI-filled content.

Don’t forget to share this newsletter on your social channels and tag Unwind AI to support us!

PS: We curate this AI newsletter every day for FREE, your support is what keeps us going. If you find value in what you read, share it with at least one, two (or 20) of your friends 😉
