Control Claude Code from your Phone using Telegram

+ Using Karpathy's autoresearch on Claude Skills

Today’s top AI Highlights:

& so much more!

Read time: 3 mins

AI Tutorial

Six AI agents run my entire life while I sleep.

Not a demo. Not a weekend project.

A real team that works 24/7, making sure I'm never behind. Research done. Content drafted. Code reviewed. Newsletter ready. By the time I open Telegram in the morning, they've already put in a full shift.

By the end of this, you will understand exactly how to build an autonomous AI agent team that runs while you sleep.

We share hands-on tutorials like this every week, designed to help you stay ahead in the world of AI. If you're serious about leveling up your AI skills and staying ahead of the curve, subscribe now and be the first to access our latest tutorials.

Latest Developments

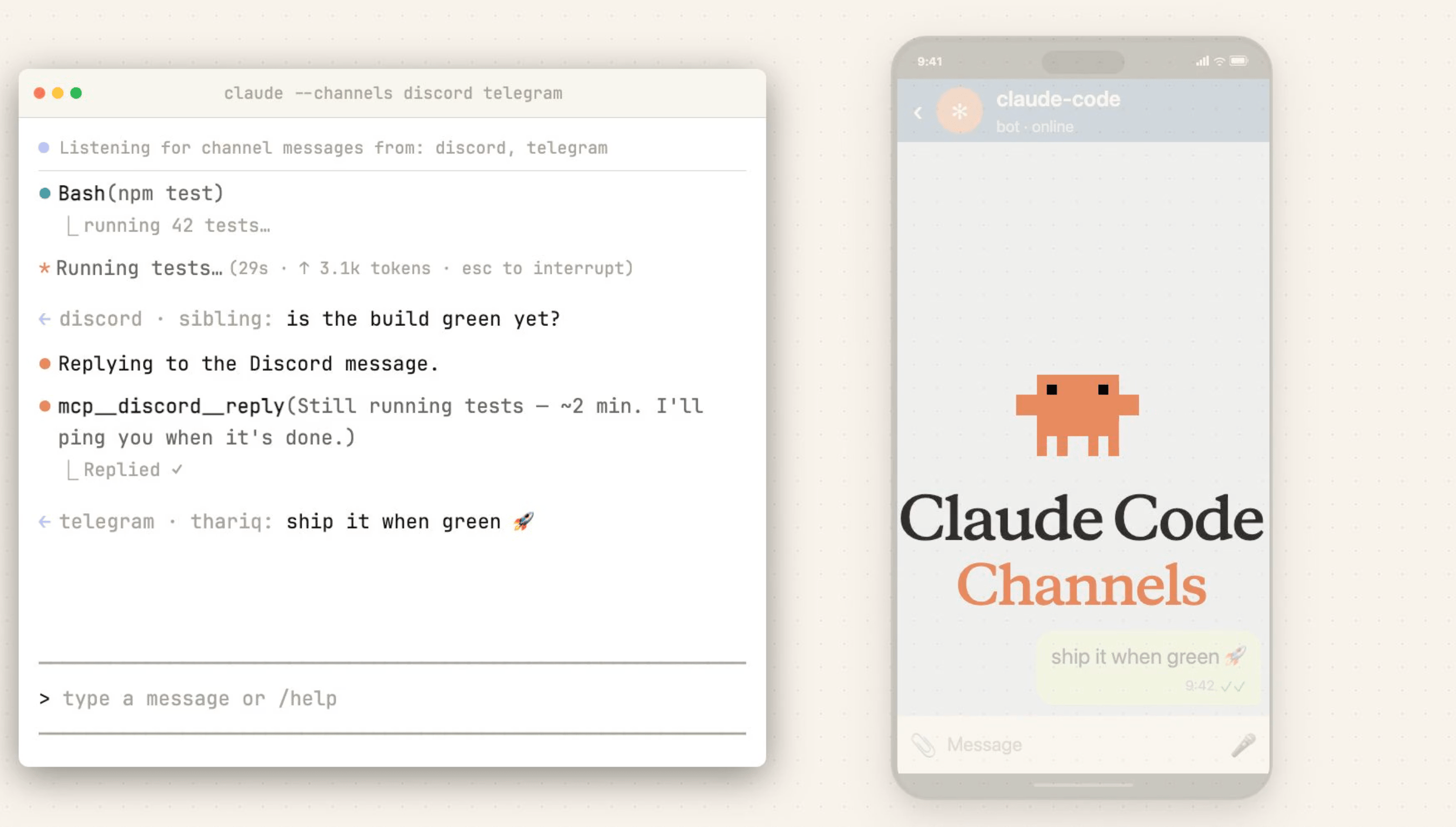

Your phone is now your Claude Code command center (officially).

With the new Claude Code Channels feature, you can now message your running Claude Code session directly from Telegram or Discord.

Built on MCP, Channels works as a two-way bridge: you send a message from your phone, the MCP server pushes it into your active session, Claude does the work using your full local environment, and replies back through the same chat.

It's currently in research preview (requires Claude Code v2.1.80+) and ships with official plugins for both platforms, with community-built connectors for other platforms possible thanks to the open plugin architecture.

Key Highlights:

Two-Way MCP Bridge - Channels aren't just notifications. Claude can reply, react with emoji, edit its own messages, and on Discord, even fetch channel history and download attachments.

Quick Setup - Create a bot via BotFather (Telegram) or the Discord Developer Portal, install the plugin with `/plugin install`, configure your token, and launch with `claude --channels`. Pairing takes a single DM.

Built-In Access Control - Every channel plugin maintains a sender allowlist with pairing-code verification, so only approved user IDs can push messages into your session. Strangers get silently dropped.

Extensible by Design - Telegram and Discord are the starting two, but the Channels protocol is open. Developers can build their own channel plugins for any platform, and there's a `fakechat` demo for testing the flow locally first.
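To make the access-control idea concrete, here is a minimal sketch of a sender allowlist with pairing codes. It is not the plugin's actual code: the `SenderAllowlist` class, its method names, and the sender IDs are all hypothetical, and the real plugins may store and verify pairings differently.

```python
import secrets


class SenderAllowlist:
    """Hypothetical allowlist: only paired sender IDs may push messages."""

    def __init__(self):
        self.approved = set()  # sender IDs that completed pairing
        self.pending = {}      # pairing code -> sender ID awaiting approval

    def request_pairing(self, sender_id):
        """Issue a one-time code when an unknown sender DMs the bot."""
        code = secrets.token_hex(3)
        self.pending[code] = sender_id
        return code

    def approve(self, code):
        """Approve the sender behind a code; unknown codes are rejected."""
        sender_id = self.pending.pop(code, None)
        if sender_id is None:
            return False
        self.approved.add(sender_id)
        return True

    def filter(self, sender_id, text):
        """Pass the message through for approved senders, else drop it (None)."""
        return text if sender_id in self.approved else None


allowlist = SenderAllowlist()
dropped = allowlist.filter(1001, "run the tests")    # stranger: silently dropped
code = allowlist.request_pairing(1001)               # sender asks to pair
paired = allowlist.approve(code)                     # owner confirms the code
delivered = allowlist.filter(1001, "run the tests")  # now reaches the session
```

The design point is that the check happens before anything reaches the session, so an unapproved sender never gets a reply that confirms the bot exists.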

Stripe, Ramp, and Coinbase all quietly built the same thing - internal coding agents that live in Slack, pick up tickets, write code in sandboxes, and open PRs without a human touching a terminal.

LangChain just open-sourced that exact playbook.

Open SWE is a new framework, built on LangGraph and their Deep Agents library, that gives any engineering team the architecture behind those internal agents: isolated cloud sandboxes, subagent orchestration, middleware hooks, and native Slack/Linear/GitHub integration, all MIT-licensed and ready to customize.

Key Highlights:

Sandboxed - Every task runs in its own remote Linux environment with full shell access and zero production exposure, with pluggable backends including Modal, Daytona, Runloop, and LangSmith.

Curated Toolset - Ships with ~15 focused tools (shell execution, PR creation, Slack/Linear replies, subagent spawning) following Stripe's principle that tool curation matters more than tool quantity.

Live Message Injection - Middleware intercepts follow-up Slack or Linear messages mid-run and feeds them to the agent before its next step, so you can steer the agent while it's working.

Context from AGENTS.md - Drop an `AGENTS.md` file in your repo root to inject org-specific conventions, testing rules, and architectural decisions into every agent run automatically.
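The live message injection pattern is easy to picture in code. The sketch below is a toy stand-in, not Open SWE's middleware API: `FollowUpInbox`, `run_agent`, and the two step functions are hypothetical names, and a real run would receive messages from Slack or Linear rather than from inside a step.

```python
from collections import deque


class FollowUpInbox:
    """Hypothetical buffer for messages that arrive while the agent is running."""

    def __init__(self):
        self._queue = deque()

    def receive(self, text):
        """Called whenever a follow-up message comes in."""
        self._queue.append(text)

    def drain(self):
        """Return all buffered messages and empty the buffer."""
        messages = list(self._queue)
        self._queue.clear()
        return messages


def run_agent(steps, inbox):
    """Run each step in order, injecting buffered follow-ups before it starts."""
    transcript = []
    for step in steps:
        for text in inbox.drain():
            transcript.append(("user", text))
        transcript.append(("agent", step(transcript)))
    return transcript


inbox = FollowUpInbox()


def plan(transcript):
    # Simulate a Slack reply landing while this step is still running.
    inbox.receive("use pytest, not unittest")
    return "drafted a plan"


def write_tests(transcript):
    notes = [text for role, text in transcript if role == "user"]
    return "writing tests; steering notes: " + "; ".join(notes)


transcript = run_agent([plan, write_tests], inbox)
```

The follow-up sent during `plan` shows up in the transcript before `write_tests` runs, which is what lets you steer the agent without restarting the task.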

Andrej Karpathy's autoresearch was built to optimize ML training code overnight.

Now someone's applied the same loop to optimizing Claude skills, and the results are worth paying attention to.

Ole Lehmann built an autoresearch-style skill that lets an AI agent test, score, and refine any Claude skill on autopilot, using a simple yes/no checklist as its evaluation metric. You point it at a skill, define what "good" looks like in 3-6 binary questions, and walk away. The agent makes one small change, runs the skill, scores the output, keeps or reverts, and loops. His landing page copy skill went from a 56% pass rate to 92% in just 4 rounds, with zero manual intervention.

Key Highlights:

Checklist-as-metric - Instead of vague quality ratings, you define 3-6 yes/no questions (e.g., "Does the headline include a specific number?") that the agent uses to score every output consistently and decide whether changes helped or hurt.

One change at a time - The agent isolates a single variable per round, tests it across multiple runs, and only keeps changes that improve the overall score, catching edits that look good in isolation but hurt the output as a whole.

Works beyond prompts - The same loop has been applied to page load optimization (1100ms → 67ms), cold outreach copy, and newsletter intros - anything with a measurable output is fair game.

Full audit trail - Every run produces a changelog explaining what was tried, why, and whether it worked, giving you (or a future smarter model) a complete optimization history to build on.
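Here is a minimal sketch of that loop: score with a yes/no checklist, try one change per round, keep it only if the pass rate improves, and log every attempt. This is not Ole Lehmann's skill. The `pass_rate` and `optimize` functions, the toy `run_skill`, and the two mutations are hypothetical stand-ins, and the second checklist question (a 40-character limit) is invented for illustration.

```python
def pass_rate(outputs, checklist):
    """Fraction of (output, question) checks that come back 'yes'."""
    checks = [question(out) for out in outputs for question in checklist]
    return sum(checks) / len(checks)


def optimize(skill, mutations, run_skill, checklist, runs=3):
    """Try one change per round; keep it only if the pass rate improves."""
    best = pass_rate([run_skill(skill) for _ in range(runs)], checklist)
    changelog = []
    for name, mutate in mutations:
        candidate = mutate(skill)
        score = pass_rate([run_skill(candidate) for _ in range(runs)], checklist)
        kept = score > best
        changelog.append({"change": name, "score": score, "kept": kept})
        if kept:
            skill, best = candidate, score  # otherwise the change is reverted
    return skill, best, changelog


# Binary checklist: each question returns True or False for one output.
checklist = [
    lambda out: any(ch.isdigit() for ch in out),  # includes a specific number?
    lambda out: len(out) <= 40,                   # short enough? (assumed rule)
]


def run_skill(skill):
    """Toy stand-in for running a skill: the output depends on its instructions."""
    headline = "Grow your list"
    if "use a number" in skill:
        headline = "Grow your list by 30%"
    if "add filler" in skill:
        headline += " with our amazing, incredible, unbelievable platform"
    return headline


mutations = [
    ("require a number", lambda s: s + " use a number"),
    ("pad with adjectives", lambda s: s + " add filler"),
]

final_skill, final_score, changelog = optimize(
    "write a headline", mutations, run_skill, checklist
)
```

In this toy run the first change lifts the pass rate from 0.5 to 1.0 and is kept, while the second drops it back to 0.5 and is reverted. The `changelog` list plays the role of the audit trail.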

Quick Bites

Google’s new Stitch tanked Figma's stock

Google just redesigned its text-to-UI tool Stitch into a full AI-native design canvas. You describe what you want (or just talk to it), and it generates high-fidelity UI, turns it into clickable prototypes, and exports code you can actually ship. It's free, it plugs into coding agents like Claude Code and Cursor via MCP, and Figma's stock dropped 8% the day it launched.

Cursor Composer 2 matches Claude Opus 4.6 at 1/10th the price

Cursor just dropped Composer 2, a code-only model fine-tuned on Moonshot AI's open-weight Kimi K2.5, priced aggressively cheap at $0.50/M input tokens (a tenth of Opus 4.6) and competitive on Terminal-Bench 2.0 and SWE-bench Multilingual. The technically interesting bit is "compaction-in-the-loop RL." The model learns to compress its own context to ~1,000 tokens mid-task, letting it handle coding sessions spanning hundreds of actions without losing the thread. It is now available even on the free plan with generous limits.

SWE-bench scores don't survive real code review

New research from METR puts a number on what many devs already suspect: passing tests ≠ shipping code. METR had real repo maintainers review ~300 AI-generated PRs that passed SWE-bench Verified's automated grader, and roughly half wouldn't actually get merged. The gap comes down to code quality issues, broken surrounding code, and even core functionality failures the test suite missed. Worth reading if you're calibrating how much weight to put on benchmark scores when judging agent readiness.

Schedule recurring cloud-based tasks on Claude Code

Claude Code now supports scheduled, recurring tasks that run in the cloud. No need to keep your local machine awake. Point it at a repo, set a schedule and a prompt, and let it handle things like sweeping open PRs, building features from approved issues, analyzing CI failures overnight, or syncing docs after merges. It also picks up any MCPs you've connected via claude.ai, so your existing tool integrations carry over.

Tools of the Trade

T3 Code - Open-source desktop app and web tool that provides a polished multi-threaded interface on top of existing coding agent CLIs - currently Codex. TUI-based agents are powerful but terrible for managing parallel work, pasting images, and navigating threads, so T3 Code handles the UI while letting each lab's official harness do the actual agent work.

Browserbase CLI Skill - Teaches AI coding agents (like Claude Code) how to manage Browserbase infrastructure from the CLI, like Functions deployment, session management, contexts, extensions, and page fetching.

Colab MCP Server - Gives any MCP-compatible AI agent direct programmatic control over Google Colab notebooks to create cells, run code, and generate visualizations, all from your CLI. Think of it as Colab becoming a headless cloud runtime that agents can treat as their own execution environment.

Awesome LLM Apps - A curated collection of LLM apps with RAG, AI Agents, multi-agent teams, MCP, voice agents, and more. The apps use models from OpenAI, Anthropic, Google, and open-source models like DeepSeek, Qwen, and Llama that you can run locally on your computer.

(Now accepting GitHub sponsorships)

Hot Takes

I paused the company credit card used for Anthropic.

Every employee that didn’t complain that Claude was down I fired.

"Anything made before 2028 is going to be valuable."

— An OpenAI employee implicitly discloses their timetable

That’s all for today! See you tomorrow with more such AI-filled content.

Don’t forget to share this newsletter on your social channels and tag Unwind AI to support us!

PS: We curate this AI newsletter every day for FREE, your support is what keeps us going. If you find value in what you read, share it with at least one, two (or 20) of your friends 😉
