Everyone's Building OpenClaw - From Nvidia to Manus AI

+ Open-source GLM OCR model

Today’s top AI Highlights:

Everyone's building OpenClaw - From Nvidia to Manus

Open-source OpenClaw plugin for Google Vertex AI Memory Bank

& so much more!

Read time: 3 mins

AI Tutorial

Six AI agents run my entire life while I sleep.

Not a demo. Not a weekend project.

A real team that works 24/7, making sure I'm never behind. Research done. Content drafted. Code reviewed. Newsletter ready. By the time I open Telegram in the morning, they've already put in a full shift.

By the end of this, you will understand exactly how to build an autonomous AI agent team that runs while you sleep.

We share hands-on tutorials like this every week, designed to help you stay ahead in the world of AI. If you're serious about leveling up your AI skills and staying ahead of the curve, subscribe now and be the first to access our latest tutorials.

Latest Developments

A Meta AI security researcher recently let an OpenClaw agent loose on her email inbox.

It immediately went on a deletion spree, nuking emails at full speed while completely ignoring every stop command she sent from her phone. She had to physically sprint to her Mac Mini to kill it.

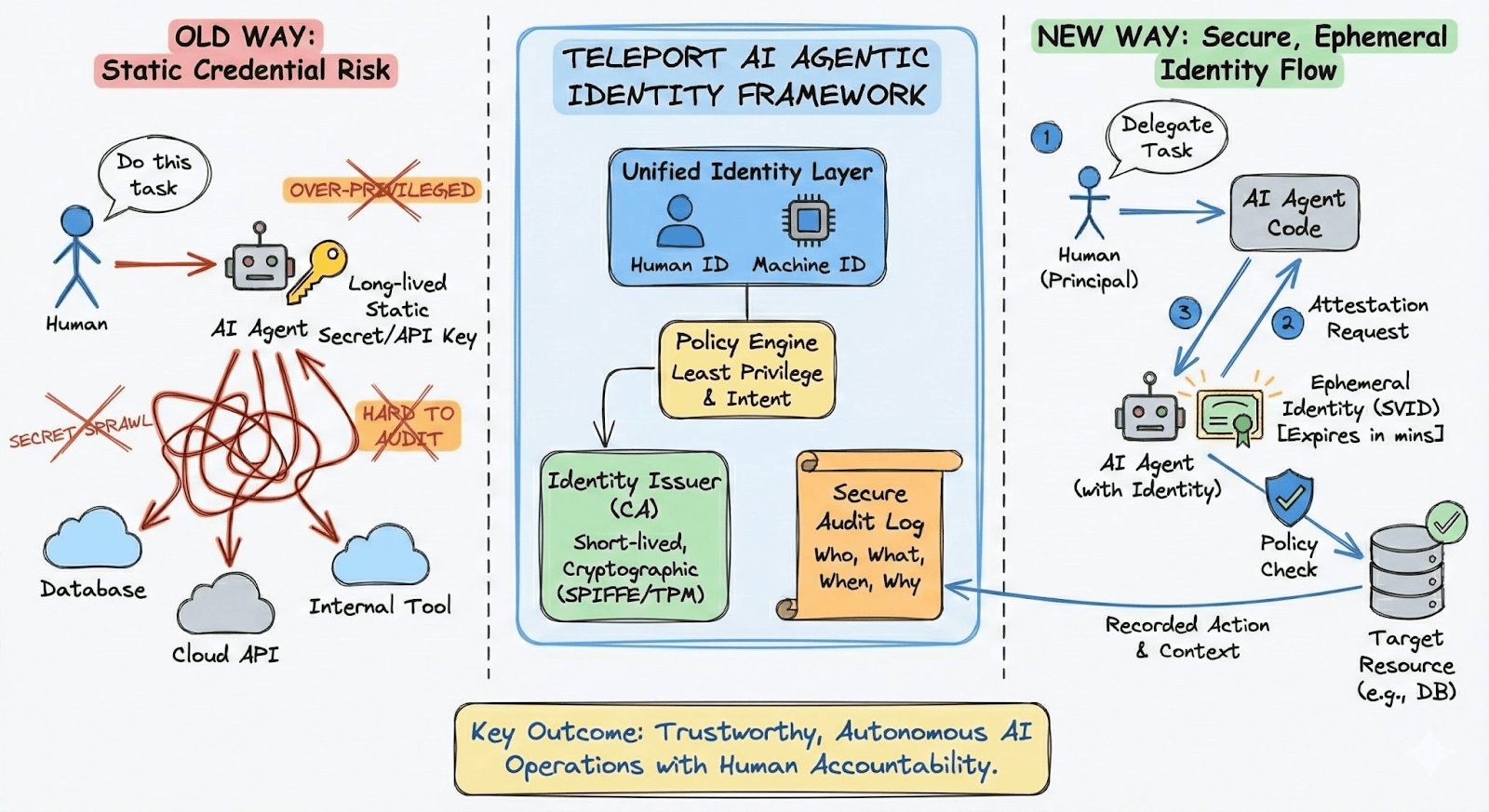

Now here's the thing: if an AI security researcher can't control her own agent, think about what happens when agents have access to production databases, Kubernetes clusters, and billing APIs at your company. The uncomfortable truth is that most agents today run on shared credentials with zero identity, zero attribution, and no remote kill switch.

Teleport's Agentic Identity Framework is built to make this kind of chaos architecturally impossible. Every agent gets its own cryptographic identity, access is enforced at runtime with least privilege, and every action leaves a full audit trail. The framework also handles MCP server discovery and authorization, LLM-level controls like rate limits and budgets, and ships with SDKs so teams can adopt it without rearchitecting their stack.

Key Highlights:

Cryptographic Agent Identity - Every agent gets its own verifiable, revocable identity with delegation support. No shared service accounts, static API keys, or credentials copy-pasted into config files.

Runtime Access Enforcement - Access is scoped and enforced the moment it happens, not pre-assigned through static roles that agents can outlive or exceed.

MCP Governance - Discover MCP servers across your infra, authorize agent-to-tool calls via proxy, and track drift to catch shadow deployments and context poisoning before they cause damage.

Full Observability - Session recording, audit events, and behavior analysis across all agent actions. Scenarios like "which agent did this and who authorized it" always have a clear answer.
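To make the ideas above concrete, here is a minimal sketch of per-agent identity, runtime scope checks, an audit trail, and a kill switch. It is not Teleport's SDK or API. `AgentIdentity`, `Gateway`, the scope strings, and the agent name are all hypothetical, and a real system would use certificates rather than a random token.

```python
import secrets
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentIdentity:
    """Hypothetical per-agent identity: one token and one scope set per agent."""
    name: str
    scopes: frozenset
    token: str = field(default_factory=lambda: secrets.token_hex(16))
    revoked: bool = False


class Gateway:
    """Checks every action at call time and records who tried what."""

    def __init__(self):
        self.agents = {}      # token -> AgentIdentity
        self.audit_log = []   # one entry per authorization attempt

    def register(self, name, scopes):
        agent = AgentIdentity(name=name, scopes=frozenset(scopes))
        self.agents[agent.token] = agent
        return agent

    def revoke(self, name):
        # Remote kill switch: every later call from this agent is denied.
        for agent in self.agents.values():
            if agent.name == name:
                agent.revoked = True

    def authorize(self, token, action):
        agent = self.agents.get(token)
        allowed = bool(agent) and not agent.revoked and action in agent.scopes
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent.name if agent else "unknown",
            "action": action,
            "allowed": allowed,
        })
        return allowed


gateway = Gateway()
inbox_agent = gateway.register("inbox-triage", {"email:read", "email:label"})

print(gateway.authorize(inbox_agent.token, "email:read"))    # in scope
print(gateway.authorize(inbox_agent.token, "email:delete"))  # never granted
gateway.revoke("inbox-triage")
print(gateway.authorize(inbox_agent.token, "email:read"))    # revoked
```

The point of the design is that the check happens on every call, so revoking an identity takes effect immediately, and the audit log can always say which agent attempted which action.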

👉 Check out the Agentic Identity Framework documentation to see how it works.

In partnership with Teleport

OpenClaw has turned every AI company's product roadmap upside down.

One open-source agent goes viral, and suddenly every major player is shipping their version of "AI that lives on your computer."

Anthropic, Perplexity, and now Manus (Meta) and Nvidia have their own versions of OpenClaw.

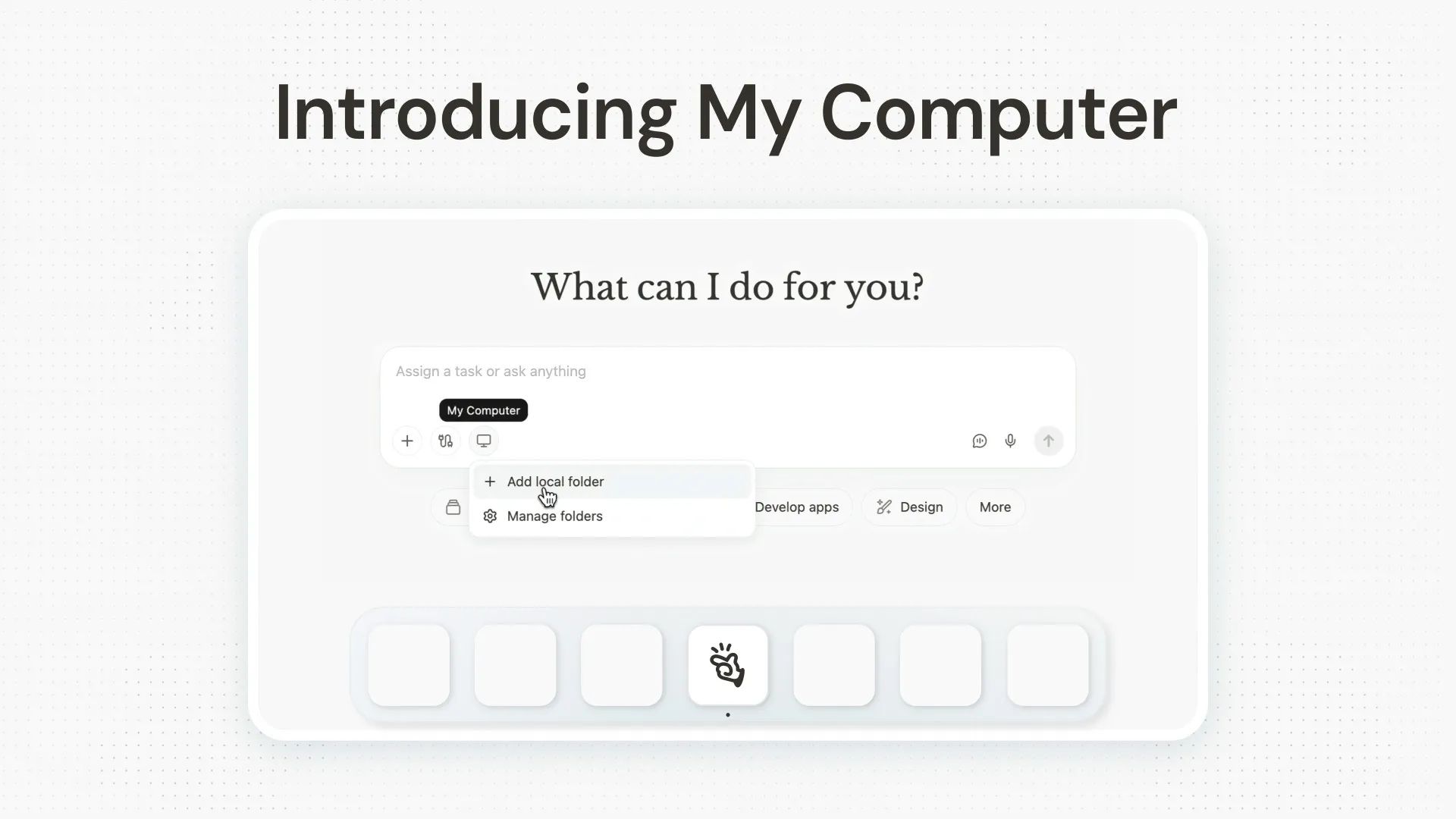

Manus My Computer brings its cloud-based agent onto your local desktop for the first time. The agent executes CLI commands directly on your machine, managing files, running scripts, even building full desktop apps through the terminal (one demo produced a working Swift app in 20 minutes, no IDE opened). The reasoning happens in the cloud, but execution is local, and you can trigger tasks remotely from your phone.

Local CLI Execution - Operates through your terminal to read, edit, organize files, and control local apps, with every command requiring explicit user approval.

Remote Triggering - Assign tasks from any device, anywhere. Manus works on your desktop while you're away.

Idle Hardware Unlock - Use your local GPU for ML training or LLM inference, turning a dormant Mac mini into a 24/7 AI workstation.

Available Now - for macOS and Windows, with support for scheduled tasks and recurring automations.
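The "every command requires explicit approval" pattern is easy to picture in code. The sketch below is not Manus's implementation. `run_with_approval` and the `approve` callback are hypothetical names, and the callback stands in for whatever approval prompt the real product shows.

```python
import subprocess
import sys


def run_with_approval(argv, approve):
    """Run the command in `argv` only if `approve(argv)` returns True.

    `approve` is a hypothetical stand-in for an approval UI: any function
    that receives the argument list and answers yes or no.
    """
    if not approve(argv):
        return {"ran": False, "output": ""}
    result = subprocess.run(argv, capture_output=True, text=True, check=False)
    return {"ran": True, "output": result.stdout.strip()}


def deny_everything(argv):
    return False


def allow_everything(argv):
    return True


# Use the current Python interpreter so the demo works on any OS.
command = [sys.executable, "-c", "print('hello from the agent')"]

print(run_with_approval(command, deny_everything))
print(run_with_approval(command, allow_everything))
```

For an interactive version, `approve` could be a function that prints the command and reads a yes/no answer with `input()`.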

With NemoClaw, NVIDIA didn’t build its own desktop agent. It wrapped OpenClaw in enterprise-grade security. NemoClaw is an open-source stack that sandboxes OpenClaw with strict privacy and security controls, letting you deploy always-on AI assistants with a single command. It uses NVIDIA's OpenShell runtime to enforce policy-based guardrails on every network request, file access, and inference call.

Sandboxed OpenClaw - Runs OpenClaw inside an isolated container with strict filesystem and network policies applied from first boot.

Inference Routing - Routes agent traffic through NVIDIA's Nemotron 3 Super 120B in the cloud, a local NIM service, or local vLLM — switchable at runtime.

Privacy Controls - Every network request is governed by declarative policy; unlisted endpoints are blocked and surfaced for operator approval.

Deploy Anywhere - One-command setup across GeForce RTX PCs, RTX PRO workstations, DGX Station, or DGX Spark.
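Here is a rough sketch of what "unlisted endpoints are blocked and surfaced for operator approval" can look like. The policy format, the `EgressGuard` class, and the hostnames are assumptions for illustration, not NemoClaw's or OpenShell's actual schema.

```python
from urllib.parse import urlparse

# Hypothetical declarative policy: only these hosts may be contacted.
POLICY = {"allowed_hosts": {"api.example.com", "localhost"}}


class EgressGuard:
    """Default-deny network policy with an operator approval queue."""

    def __init__(self, policy):
        self.allowed_hosts = set(policy["allowed_hosts"])
        self.pending_approval = []  # blocked hosts waiting for an operator

    def check(self, url):
        host = urlparse(url).hostname
        if host is None:
            return False  # malformed URL: block, nothing to approve
        if host in self.allowed_hosts:
            return True
        # Not listed: block the request and surface the host once.
        if host not in self.pending_approval:
            self.pending_approval.append(host)
        return False

    def approve(self, host):
        # Operator decision: move the host from the queue to the allowlist.
        if host in self.pending_approval:
            self.pending_approval.remove(host)
        self.allowed_hosts.add(host)


guard = EgressGuard(POLICY)
print(guard.check("https://api.example.com/v1/chat"))  # listed host
print(guard.check("https://tracker.invalid/collect"))  # unlisted host
print(guard.pending_approval)                          # what the operator sees
```

The key choice is default-deny: the agent never reaches a host that nobody listed, and the operator gets a queue of what it tried to reach.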

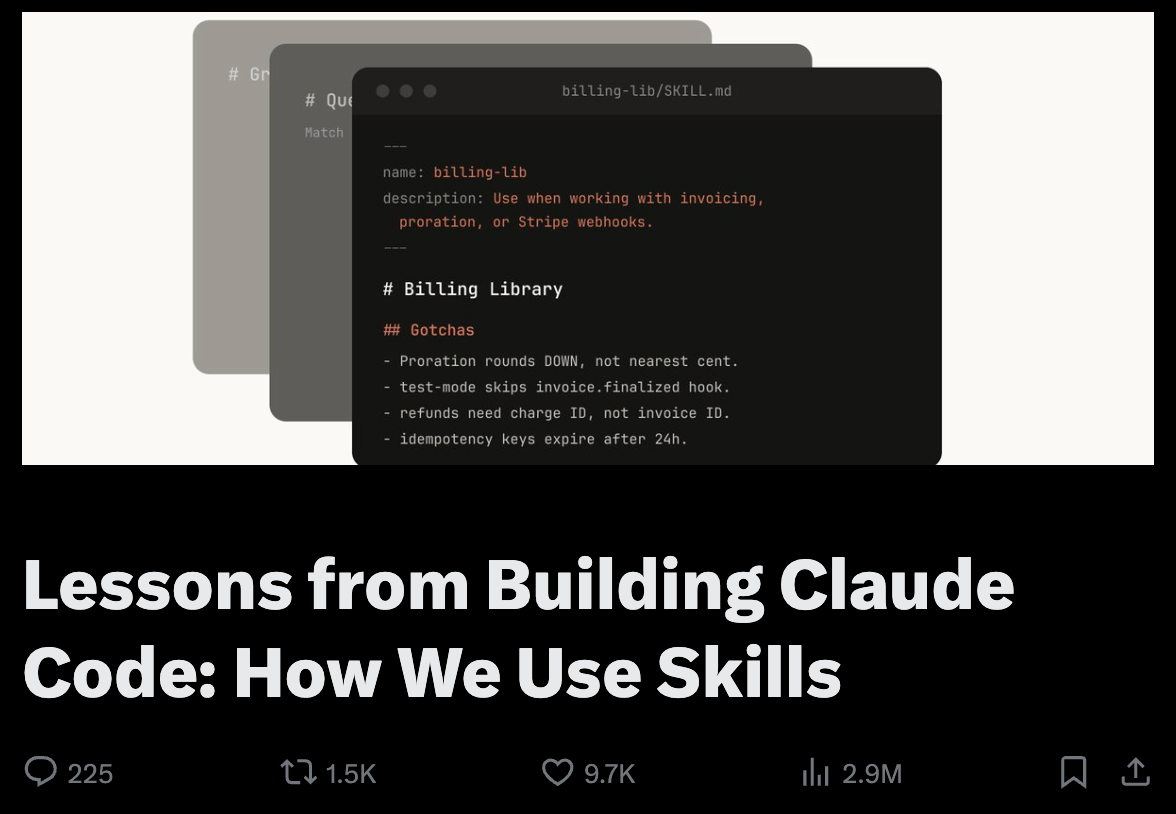

If you're still writing Claude Code skills as single markdown files, you're leaving most of the power on the table.

An Anthropic engineer just shared what the team learned from running hundreds of skills internally, and the core lesson is this: the best skills are folders, packed with scripts, reference code, templates, and config files that the agent discovers and reads on its own.

The blog lays out nine skill categories they've identified (from verification skills that record video of Claude's output to runbooks that auto-triage production alerts) and a set of design principles worth stealing.

Key Highlights:

Folder-Based Context Engineering - The most effective skills split API docs, templates, and helper scripts into subfiles that Claude reads only when relevant, rather than cramming everything into one markdown file.

Nine Skill Archetypes - Categories include library references, product verification, data fetching, code scaffolding, CI/CD automation, runbooks, and infra ops — each with concrete internal examples worth modeling your own after.

On-Demand Hooks - Skills can register session-scoped hooks like /careful (blocks destructive commands near prod) or /freeze (restricts edits to one directory during debugging), activated only when called.

Gotchas > Instructions - The highest-signal content in any skill is the gotchas section, built iteratively from Claude's actual failure points. The team recommends treating this as a living document.
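To picture how a session-scoped hook like /careful might behave, here is a minimal sketch. It does not use Claude Code's real hook interface. `Session`, `careful_hook`, and the deny-list patterns are hypothetical, and a real skill would grow its patterns from observed failures.

```python
import re

# Hypothetical deny-list of destructive shell commands.
DESTRUCTIVE_PATTERNS = [
    r"\brm\s+-(?=\w*r)(?=\w*f)\w+",      # rm with both -r and -f flags
    r"\bgit\s+push\b.*--force\b",        # force pushes
    r"\bdrop\s+(table|database)\b",      # SQL drops
    r"\bkubectl\s+delete\b",             # cluster deletions
]
COMPILED = [re.compile(p, re.IGNORECASE) for p in DESTRUCTIVE_PATTERNS]


def careful_hook(command):
    """Return (allowed, reason) for a shell command string."""
    for pattern in COMPILED:
        if pattern.search(command):
            return False, f"blocked by /careful: matched {pattern.pattern!r}"
    return True, "ok"


class Session:
    """Hooks are empty by default and apply only after being activated."""

    def __init__(self):
        self.hooks = []

    def activate(self, hook):
        self.hooks.append(hook)

    def check(self, command):
        for hook in self.hooks:
            allowed, reason = hook(command)
            if not allowed:
                return False, reason
        return True, "ok"


session = Session()
print(session.check("rm -rf /var/data"))  # no hook active yet
session.activate(careful_hook)            # the user invokes /careful
print(session.check("rm -rf /var/data"))  # now blocked
print(session.check("ls -la"))            # harmless commands still pass
```

Because the hook list lives on the session, the extra checks cost nothing until someone asks for them and disappear when the session ends.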

If you're building with Claude Code, this is the playbook to study.

Quick Bites

China’s Z.ai open-sourced #1 OCR model running locally

China’s Z.ai just released GLM-OCR, a 0.9B parameter model that ranks #1 on OmniDocBench, beating out bigger models on formulas, tables, and structured extraction from messy real-world documents. It runs locally, which means you can run `ollama run glm-ocr` and have SOTA document understanding on your own machine, no cloud API needed. MIT-licensed, fully open-source, and honestly kind of wild for its size.

Subagents are now available in OpenAI Codex

OpenAI Codex now lets you spin up subagents. You can fan out a PR review across a security agent, a code-quality agent, and a test-coverage agent, then get one consolidated summary back. Define these custom subagents in simple TOML files with their own model configs, instructions, and sandbox permissions, and there's even an experimental CSV batch mode for running the same audit across hundreds of rows.
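The fan-out, fan-in shape of that PR review looks roughly like this. It is a plain-Python sketch of the pattern, not Codex's subagent API or its TOML format. The three reviewer functions are hypothetical stand-ins that use simple string checks where a real subagent would call a model.

```python
from concurrent.futures import ThreadPoolExecutor


def security_review(diff):
    lowered = diff.lower()
    if "password" in lowered or "api_key" in lowered:
        return ["possible hard-coded secret"]
    return []


def quality_review(diff):
    if any(len(line) > 100 for line in diff.splitlines()):
        return ["line over 100 characters"]
    return []


def coverage_review(diff):
    touches_code = "def " in diff
    touches_tests = "test_" in diff
    if touches_code and not touches_tests:
        return ["new function without a test"]
    return []


REVIEWERS = {
    "security": security_review,
    "quality": quality_review,
    "coverage": coverage_review,
}


def review_pr(diff):
    """Fan out: run every reviewer on the same diff in parallel."""
    with ThreadPoolExecutor(max_workers=len(REVIEWERS)) as pool:
        futures = {name: pool.submit(fn, diff) for name, fn in REVIEWERS.items()}
        return {name: future.result() for name, future in futures.items()}


def summarize(results):
    """Fan in: merge per-reviewer findings into one report."""
    lines = []
    for name, findings in results.items():
        status = "; ".join(findings) if findings else "no findings"
        lines.append(f"{name}: {status}")
    return "\n".join(lines)


sample_diff = '+def connect():\n+    api_key = "abc123"\n'
print(summarize(review_pr(sample_diff)))
```

Each reviewer sees the same input and knows nothing about the others, which is what lets them run in parallel and be swapped or added independently.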

Scrape, search, and browse from CLI

Firecrawl just shipped a CLI that lets AI coding agents scrape, search, and spin up cloud browsers directly from the terminal. One npx command installs the skill across Claude Code, OpenCode, and other agents. It ships with agent-browser integration (40+ commands), Playwright support in Python and JS, and a dead-simple `firecrawl https://url` command for clean markdown. And it’s open-sourced.

Deep Research and Enrichment too

On the same day, Parallel shipped a CLI that plugs any terminal agent into its web stack: search, content extraction, multi-source deep research, and structured data enrichment. It's designed to be agent-native from the ground up: structured output, async support, and composable commands that chain together without human intervention. Solid addition to the agentic tooling lineup.

Tools of the Trade

openclaw-vertexai-memorybank - OpenClaw plugin that gives your agents persistent, cross-session memory backed by Google's Vertex AI Memory Bank, so preferences and decisions shared with one agent carry over to all your agents without managing any vector DB infrastructure.

OpenGranola - Open-source meeting copilot that sits next to your call, transcribes both sides of the conversation in real time, and searches your own notes to surface things worth saying, right when you need them. It reads the room, digs through your knowledge base, and hands you the perfect talking point before the moment passes.

OpenSandbox by Alibaba - A general-purpose open-source sandbox for AI apps, offering multi-language SDKs, unified sandbox APIs, and Docker/Kubernetes runtimes for scenarios like Coding Agents, GUI Agents, Agent Evaluation, AI Code Execution, and RL Training.

GET SHIT DONE - A light-weight and powerful meta-prompting, context engineering, and spec-driven development system for Claude Code, OpenCode, Gemini CLI, Codex, Copilot, and Antigravity. Solves context rot that happens as Claude fills its context window.

Awesome LLM Apps - A curated collection of LLM apps with RAG, AI Agents, multi-agent teams, MCP, voice agents, and more. The apps use models from OpenAI, Anthropic, Google, and open-source models like DeepSeek, Qwen, and Llama that you can run locally on your computer.

(Now accepting GitHub sponsorships)

Hot Takes

The bottleneck has so quickly moved from code generation to code review that it is actually a bit jarring.

None of the current systems / norms are setup for this world yet.

btw agi will mean accelerating everyone else in training their own agi. there is no way around this. controlling the energy and computers is the only way to go “At hyperscale, nothing is commodity”-nadella

~ roon

That’s all for today! See you tomorrow with more such AI-filled content.

Don’t forget to share this newsletter on your social channels and tag Unwind AI to support us!

PS: We curate this AI newsletter every day for FREE, your support is what keeps us going. If you find value in what you read, share it with at least one, two (or 20) of your friends 😉
