Git Clone an Entire AI Agency with 120+ Agents

+ 1M context for Claude Opus 4.6 and Sonnet 4.6

Today’s top AI Highlights:

& so much more!

Read time: 3 mins

AI Tutorial

Six AI agents run my entire life while I sleep.

Not a demo. Not a weekend project.

A real team that works 24/7, making sure I'm never behind. Research done. Content drafted. Code reviewed. Newsletter ready. By the time I open Telegram in the morning, they've already put in a full shift.

By the end of this, you will understand exactly how to build an autonomous AI agent team that runs while you sleep.

We share hands-on tutorials like this every week, designed to help you stay ahead in the world of AI. If you're serious about leveling up your AI skills and staying ahead of the curve, subscribe now and be the first to access our latest tutorials.

Latest Developments

Someone open-sourced an entire AI agency with 120 specialized agents across 12 divisions, each with its own personality, workflows, and deliverables.

The Agency is a collection of meticulously crafted AI agent profiles that you can drop into Claude Code, Cursor, Aider, Windsurf, Gemini CLI, or GitHub Copilot and immediately start working with domain-specific specialists.

The repo organizes agents into divisions - engineering, design, marketing, sales, QA, game development, and more, where each agent comes with a defined identity, communication style, success metrics, and real code examples. It's already at 31k stars on GitHub.

Key Highlights:

Deep Specialization — Each of the 120 agents goes well beyond generic prompts, packing in domain expertise, personality traits, workflow processes, and concrete deliverables with code examples.

Multi-Tool Support — Ships with conversion and install scripts for Claude Code, GitHub Copilot, Cursor, Aider, Windsurf, Gemini CLI, Antigravity, and OpenCode. One `install.sh` command auto-detects your tools.

Full Agency Coverage — Spans 12 divisions including frontend/backend engineering, DevOps, mobile, security, paid media, sales, spatial computing, and game development (Unity, Unreal, Godot, Roblox).

Plug-and-Play Architecture — Copy agents to your tool's config directory and activate by name — no setup overhead, fully forkable and customizable under MIT license.
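If you'd rather see what "copy agents to your tool's config directory" amounts to, here is a minimal Python sketch. It is not the repo's `install.sh`: the function name `install_agents`, the assumption that each agent profile is a Markdown file, and the example destination below are illustrative guesses, so check your tool's docs for the real location.

```python
import shutil
from pathlib import Path


def install_agents(source_dir: Path, config_dir: Path) -> list[str]:
    """Copy every Markdown agent profile under source_dir into config_dir.

    Returns the sorted names of the agents that were copied.
    Assumes (hypothetically) one agent per .md file.
    """
    config_dir.mkdir(parents=True, exist_ok=True)
    installed = []
    # rglob also walks division sub-folders (engineering/, design/, ...).
    for profile in sorted(source_dir.rglob("*.md")):
        if profile.name.lower() == "readme.md":
            continue  # documentation, not an agent profile
        shutil.copy2(profile, config_dir / profile.name)
        installed.append(profile.stem)
    return sorted(installed)
```

A call might look like `install_agents(Path("path/to/the-agency"), Path.home() / ".claude" / "agents")`, where both paths are placeholders for wherever you cloned the repo and wherever your tool reads agents from.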

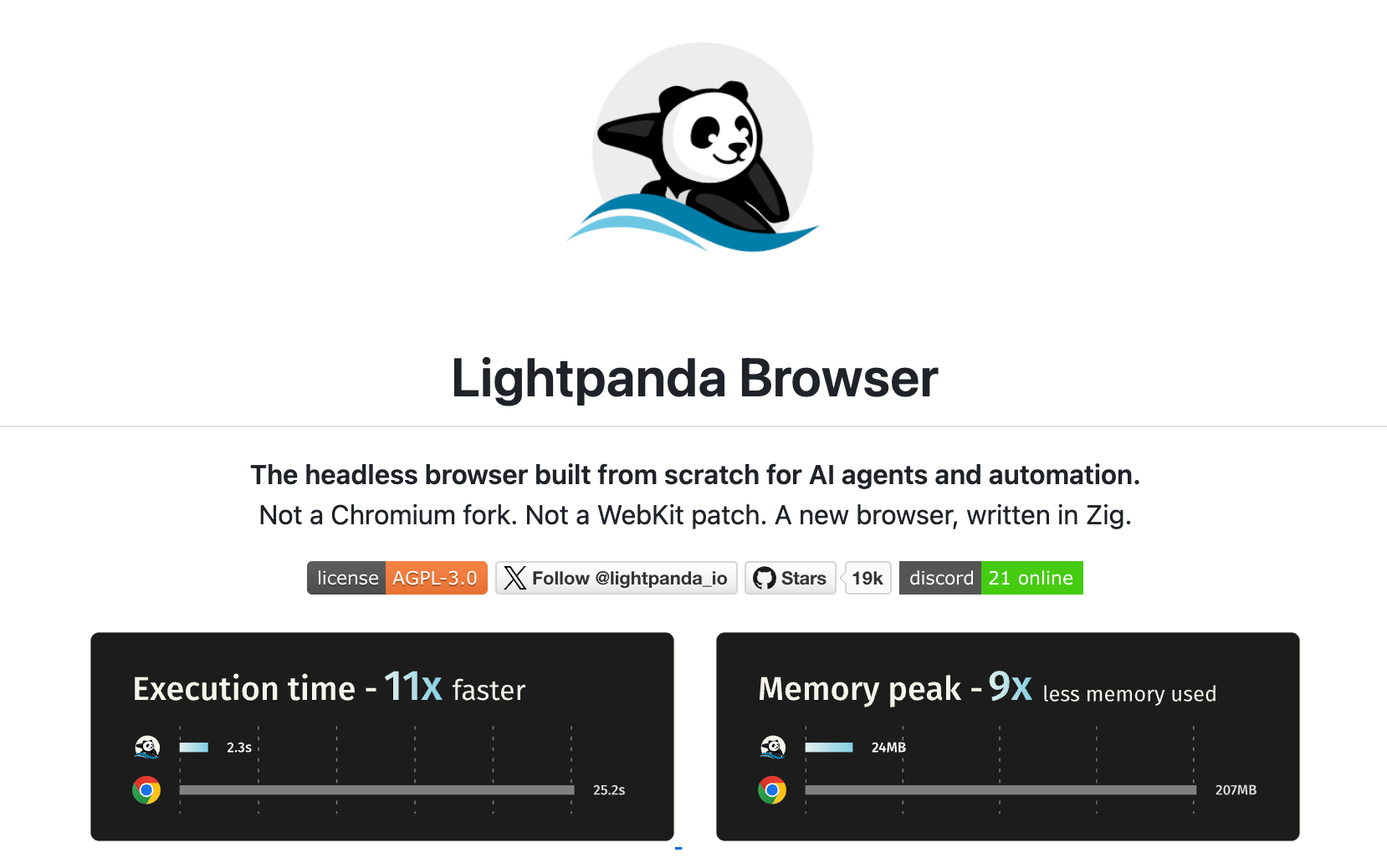

Most AI agents that touch the web run headless Chrome under the hood. It works, but it's a resource hog that almost nobody questioned.

Lightpanda did question it. Built from scratch in Zig (not another Chromium fork), this open-source headless browser is 11x faster and uses 9x less memory than Chrome. It speaks Playwright, Puppeteer, and CDP out of the box, so your existing agent code works without changes.

The project is currently in beta with nightly builds available for Linux and macOS, plus official Docker images.
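Here is roughly what "swap the browser endpoint" could look like from Python. Treat it as a sketch under assumptions: it presumes the `playwright` package is installed and that a Lightpanda CDP server is already running locally. The address `ws://127.0.0.1:9222` and the helper names `cdp_endpoint` and `fetch_title` are placeholders for illustration, not values confirmed by the project.

```python
def cdp_endpoint(host: str = "127.0.0.1", port: int = 9222) -> str:
    """Build the WebSocket URL of a CDP server (host and port are assumed defaults)."""
    return f"ws://{host}:{port}"


def fetch_title(url: str, endpoint: str) -> str:
    """Open url in an already-running CDP browser and return the page title."""
    # Imported lazily so this module loads even where Playwright is not installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        # Attach to the remote browser instead of launching Chrome;
        # the automation logic after this line stays the same.
        browser = p.chromium.connect_over_cdp(endpoint)
        try:
            context = browser.new_context()
            page = context.new_page()
            page.goto(url)
            return page.title()
        finally:
            browser.close()
```

With a server listening, `fetch_title("https://example.com", cdp_endpoint())` would return that page's title. Pointing `endpoint` at a hosted WebSocket URL instead of a local one is the only change needed to go from self-hosted to cloud.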

Key Highlights:

Performance – In benchmarks running Puppeteer across 100 pages on an EC2 m5.large instance, Lightpanda completed in 2.3s using 24MB of memory versus Chrome's 25.2s and 207MB.

Built from Zero – Written entirely in Zig with a V8 JavaScript engine, it's not based on Chromium, Blink, or WebKit. No rendering overhead, just headless execution.

Drop-in Compatibility – Supports CDP natively, so existing Puppeteer and Playwright scripts work by swapping the browser endpoint — no code changes to your automation logic.

Self-Host or Cloud – Lightpanda also offers a hosted cloud service where you connect via a WebSocket endpoint, letting you skip local browser management entirely.
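The headline "11x faster, 9x less memory" claim can be checked against the benchmark figures quoted above with a few lines of arithmetic. The numbers come straight from the text; the variable and function names are just for illustration.

```python
# Benchmark figures quoted above (Puppeteer, 100 pages, EC2 m5.large).
CHROME = {"seconds": 25.2, "memory_mb": 207}
LIGHTPANDA = {"seconds": 2.3, "memory_mb": 24}


def ratio(baseline: float, candidate: float) -> float:
    """How many times larger the baseline figure is than the candidate."""
    return baseline / candidate


speedup = ratio(CHROME["seconds"], LIGHTPANDA["seconds"])
memory_saving = ratio(CHROME["memory_mb"], LIGHTPANDA["memory_mb"])

print(f"speed: {speedup:.1f}x, memory: {memory_saving:.1f}x")
# prints "speed: 11.0x, memory: 8.6x"
```

So the speed figure matches almost exactly, and the memory figure is about 8.6x, which rounds to the quoted 9x.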

Andrey Breslav gave seven million developers Kotlin.

Now he wants them to stop writing code altogether. His new project CodeSpeak is a programming language where you maintain structured English specifications instead of source code, and LLMs handle the implementation.

It's not prompting and it's not vibe coding; it's closer to a higher-level language that happens to understand English and uses an LLM as its compiler. On open-source projects like yt-dlp and BeautifulSoup4, specs came in at 5–10x smaller than the code they replaced, with more passing tests after migration.

CodeSpeak ships with dependency tracking, modular spec imports, and strict scoping controls for what the LLM can touch. Currently in alpha, it's bring-your-own-key (BYOK) with the Anthropic API.

Key Highlights:

Spec-Driven - You write concise `.cs.md` spec files describing what the code should do, and CodeSpeak generates, tests, and maintains the implementation. Specs can import each other for modular builds with automatic dependency resolution.

Mixed Mode - Drop CodeSpeak into an existing project and it only touches files you explicitly assign to it. You don't need to rewrite anything to start using it.

Scope Awareness - The build system tracks which files each spec owns and warns you when an LLM-generated change spills outside that boundary, with configurable strictness levels.

Takeover Feature (Coming Soon) - CodeSpeak will be able to extract specs from existing code, letting teams gradually migrate from maintaining code to maintaining specs.
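To make the Scope Awareness idea concrete, here is a small Python sketch of a scope check. It is not CodeSpeak's implementation: the glob-pattern ownership model, the function names, and the strictness levels `off`, `warn`, and `error` are all hypothetical, chosen only to show how "warn when a change spills outside the boundary" could work.

```python
from fnmatch import fnmatch


class ScopeViolation(Exception):
    """Raised in 'error' mode when a change touches files a spec does not own."""


def out_of_scope(owned_patterns: list[str], changed_files: list[str]) -> list[str]:
    """Return the changed files that match none of the spec's owned patterns."""
    return [
        path
        for path in changed_files
        if not any(fnmatch(path, pattern) for pattern in owned_patterns)
    ]


def check_scope(owned_patterns, changed_files, strictness="warn"):
    """Apply a (hypothetical) strictness level: 'off' ignores, 'warn' reports, 'error' raises."""
    spilled = out_of_scope(owned_patterns, changed_files)
    if strictness == "off" or not spilled:
        return []
    if strictness == "error":
        raise ScopeViolation(f"changes outside spec scope: {spilled}")
    for path in spilled:
        print(f"warning: {path} is outside the spec's scope")
    return spilled
```

For example, a spec that owns `src/parser/*.py` would pass a change to `src/parser/html.py` but flag one to `setup.py`.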

Quick Bites

1M context now GA for Claude Opus 4.6 and Sonnet 4.6

Claude Opus 4.6 and Sonnet 4.6 now ship with the full 1M token context window at standard per-token pricing. Media limits jump to 600 images/PDF pages per request, and Claude Code users on Max, Team, and Enterprise get 1M context by default, which means fewer compactions and longer sessions that actually remember what happened at the start.

R2D3: A visual intro to Machine Learning

If you've never seen R2D3's interactive explainer on machine learning, it's worth bookmarking. It walks you through decision trees — from splitting data on elevation and price to overfitting on training data — using gorgeous D3.js visualizations that make the concepts click instantly. A genuinely fun refresher even if you already know the material inside out.

Chrome DevTools MCP for live debugging

Chrome DevTools now has an MCP server that lets coding agents connect directly to your active browser session. That means your agent can inspect a failing network request or a broken element you've selected in DevTools, without needing to spin up a separate browser instance or re-authenticate. Available in Chrome M144 (Beta) with an --autoConnect flag.

Claude Code ships Auto Mode

Anthropic is rolling out Auto Mode for Claude Code, a research preview that lets the agent decide which actions need your approval and which ones can proceed on their own. It’s a sweet spot between getting prompted on every file write and the sketchy --dangerously-skip-permissions flag. Enterprise admins can disable it via MDM or registry, and Anthropic still recommends running it in sandboxed environments for now.

Z.ai drops high-speed GLM-5-Turbo for OpenClaw

Z.ai just dropped GLM-5-Turbo, a speed-optimized variant of their 744B MoE flagship, purpose-built for agentic workflows inside OpenClaw. It's been fine-tuned from the training stage up for tool calling, long-chain execution, and persistent tasks, with a 202K context window and API pricing at $0.96/M input, $3.20/M output. Already live on the Z.ai API and OpenRouter.
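For a feel of what the quoted GLM-5-Turbo prices mean per request, here is a quick back-of-the-envelope calculator. The prices are the ones quoted above; the token counts in the example are made up for illustration.

```python
# Prices quoted above, in US dollars per million tokens.
INPUT_PRICE_PER_M = 0.96
OUTPUT_PRICE_PER_M = 3.20


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in dollars for one request at the quoted prices."""
    return (
        input_tokens / 1_000_000 * INPUT_PRICE_PER_M
        + output_tokens / 1_000_000 * OUTPUT_PRICE_PER_M
    )


# Hypothetical agent step: 150K tokens of context in, 4K tokens out.
print(f"${request_cost(150_000, 4_000):.4f}")
# prints "$0.1568"
```

At these rates the input side dominates long-context agent loops, so an agent that re-sends a large context on every step pays mostly for input tokens.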

Tools of the Trade

Agentic Fabriq - A YC-backed identity and permissions layer for AI agents. Basically, Okta but for agents. It ensures agents can only access the data and tools their associated user is actually cleared for, with audit trails across your org.

GitNexus - Indexes any codebase into a knowledge graph that maps every dependency, call chain, and execution flow, then exposes it via MCP so AI coding agents stop shipping blind edits. Works as a CLI with Cursor/Claude Code integration or as a browser-based graph explorer — 9.4k stars and climbing.

Lossless Claw - An OpenClaw plugin that replaces the default sliding-window context truncation with a DAG-based summarization system where nothing is ever lost. Raw messages persist in SQLite, older chunks get recursively summarized, and agents can drill back into any summary to recover full detail.

Awesome LLM Apps - A curated collection of LLM apps with RAG, AI Agents, multi-agent teams, MCP, voice agents, and more. The apps use models from OpenAI, Anthropic, Google, and open-source models like DeepSeek, Qwen, and Llama that you can run locally on your computer.

(Now accepting GitHub sponsorships)
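The Lossless Claw entry above describes raw messages persisting in SQLite while older chunks get recursively summarized and stay drillable. A toy Python version of that idea is sketched below. It is not the plugin's code: the two-table schema, the function names, and the truncating `summarize` placeholder (where a real system would call an LLM) are all assumptions, and the structure here is a simple tree, the most basic kind of DAG.

```python
import sqlite3


def open_store() -> sqlite3.Connection:
    """Create an in-memory store; a real plugin would use a file on disk."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE nodes (id INTEGER PRIMARY KEY, kind TEXT, text TEXT)")
    db.execute("CREATE TABLE edges (parent INTEGER, child INTEGER)")
    return db


def node_text(db, node_id):
    """Fetch the stored text of one node."""
    return db.execute("SELECT text FROM nodes WHERE id = ?", (node_id,)).fetchone()[0]


def add_node(db, kind, text, children=()):
    """Insert a message or summary node and link it to the nodes it covers."""
    node_id = db.execute(
        "INSERT INTO nodes (kind, text) VALUES (?, ?)", (kind, text)
    ).lastrowid
    db.executemany(
        "INSERT INTO edges (parent, child) VALUES (?, ?)",
        [(node_id, child) for child in children],
    )
    return node_id


def summarize(texts):
    """Placeholder summarizer (truncates each text); stands in for an LLM call."""
    return " | ".join(t[:20] for t in texts)


def compact(db, node_ids, chunk_size=2):
    """Recursively summarize chunks until a single root node remains."""
    if not node_ids or chunk_size < 2:
        raise ValueError("need at least one node and chunk_size >= 2")
    while len(node_ids) > 1:
        next_level = []
        for i in range(0, len(node_ids), chunk_size):
            chunk = node_ids[i : i + chunk_size]
            texts = [node_text(db, n) for n in chunk]
            next_level.append(add_node(db, "summary", summarize(texts), chunk))
        node_ids = next_level
    return node_ids[0]


def expand(db, node_id):
    """Drill from any summary back down to the raw messages it covers."""
    children = [
        row[0]
        for row in db.execute(
            "SELECT child FROM edges WHERE parent = ? ORDER BY child", (node_id,)
        )
    ]
    if not children:  # leaf: a raw message
        return [node_text(db, node_id)]
    return [text for child in children for text in expand(db, child)]
```

Because summaries only point at the nodes they cover and nothing is deleted, `expand` on the root returns every original message in order, which is the "nothing is ever lost" property in miniature.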

Hot Takes

your edge is whatever you know that the models don't know

~ kepano

If You're an Entrepreneur: Stop designing businesses for 2024 scarcity. Design for 2030 abundance. Assume intelligence is free, energy is unlimited, and robotic labor costs pennies per hour. What becomes possible that's impossible today?

That’s all for today! See you tomorrow with more such AI-filled content.

Don’t forget to share this newsletter on your social channels and tag Unwind AI to support us!

PS: We curate this AI newsletter every day for FREE, your support is what keeps us going. If you find value in what you read, share it with at least one, two (or 20) of your friends 😉
