Karpathy Open-Sourced a 24/7 AI Research Lab

+ Andrew Ng's Context Hub, Multi-agent Code Reviews in Claude

Today’s top AI Highlights:

& so much more!

Read time: 3 mins

AI Tutorial

Six AI agents run my entire life while I sleep.

Not a demo. Not a weekend project.

A real team that works 24/7, making sure I'm never behind. Research done. Content drafted. Code reviewed. Newsletter ready. By the time I open Telegram in the morning, they've already put in a full shift.

By the end of this, you will understand exactly how to build an autonomous AI agent team that runs while you sleep.

We share hands-on tutorials like this every week, designed to help you stay ahead in the world of AI. If you're serious about leveling up your AI skills and staying ahead of the curve, subscribe now and be the first to access our latest tutorials.

Latest Developments

Here's a problem every coding agent user has hit: you ask your agent to integrate Stripe, and it confidently writes code using API parameters that were deprecated six months ago. Your agent doesn't know what it doesn't know.

Andrew Ng's team at DeepLearning.AI just shipped Context Hub, an open-source CLI that solves this. Run chub get stripe/api and you get curated, versioned markdown docs optimized for LLMs. Run chub annotate, and your agents save workarounds as long-term memory across sessions.

This is context engineering in a single CLI. If you've been building with CLAUDE.md files or custom context docs, Context Hub standardizes the whole pattern. Add it to your agent's instructions, and it can check current docs instead of guessing at an outdated endpoint.

Key Highlights:

Curated doc fetching - Agents search and pull versioned, language-specific API documentation via CLI, replacing hallucinated or outdated API calls with accurate references.

Agent annotations - When your agent discovers a workaround or gap in the docs, it can save notes locally that automatically surface in future sessions.

Community feedback loop - A built-in upvote/downvote system lets agents flag doc quality, feeding improvements back to maintainers over time.

Easy agent integration - Works with any coding agent that can run CLI commands; for Claude Code users, there's a ready-made SKILL.md you can drop into your skills directory.
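To make the annotation idea concrete, here's a toy Python sketch of the pattern: a note saved in one session gets appended to the doc the next time it's fetched. Everything in it (the AnnotationStore class, the JSON file, the "Agent notes" heading) is a made-up illustration, not Context Hub's actual storage format or API.

```python
import json
import tempfile
from pathlib import Path


class AnnotationStore:
    """Toy local note store keyed by doc ID (hypothetical, not Context Hub's format)."""

    def __init__(self, path: Path):
        self.path = path

    def _load(self) -> dict:
        # Notes live in a JSON file so they survive across agent sessions.
        if self.path.exists():
            return json.loads(self.path.read_text())
        return {}

    def annotate(self, doc_id: str, note: str) -> None:
        """Save a workaround or gap the agent found for this doc."""
        notes = self._load()
        notes.setdefault(doc_id, []).append(note)
        self.path.write_text(json.dumps(notes, indent=2))

    def get(self, doc_id: str, doc_text: str) -> str:
        """Return the doc with any saved notes appended at the end."""
        notes = self._load().get(doc_id, [])
        if not notes:
            return doc_text
        lines = "\n".join(f"- {n}" for n in notes)
        return f"{doc_text}\n\n## Agent notes\n{lines}"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "notes.json"
        # Session 1: the agent records something it learned the hard way.
        AnnotationStore(path).annotate("stripe/api", "example workaround note")
        # Session 2: a fresh store instance still surfaces the note.
        print(AnnotationStore(path).get("stripe/api", "# Stripe API docs"))
```

The point of keeping the notes in a file rather than in the prompt is that a brand-new session picks them up without anyone pasting them back in.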

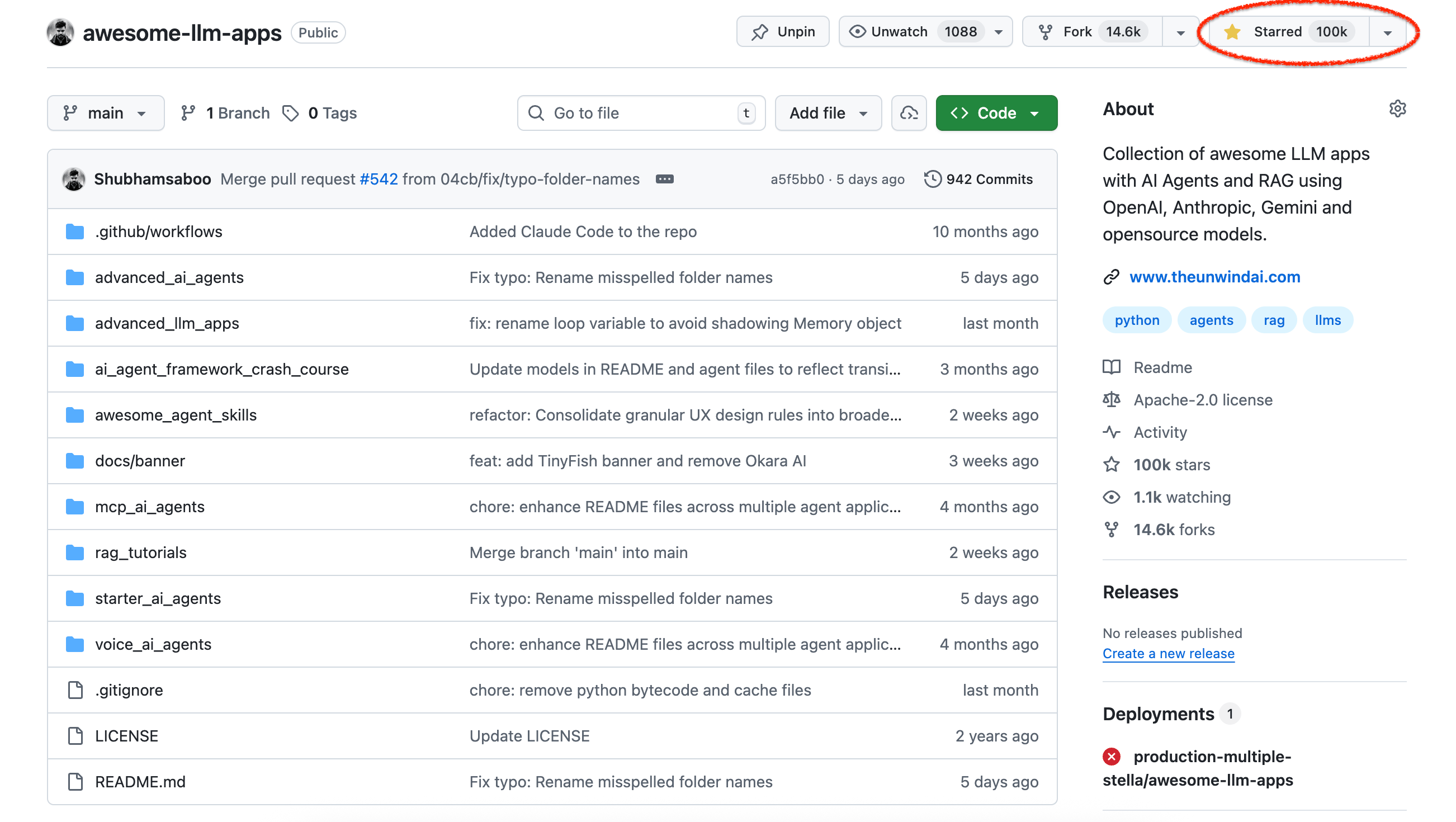

This is wild! Awesome LLM Apps is now among the top 100 most-starred repositories of all time, right up there with projects that practically run the internet.

The repo is a fully open-source, Apache 2.0-licensed collection of hands-on LLM applications - AI agents, multi-agent teams, RAG pipelines, voice agents, MCP tools, and more, each with step-by-step instructions you can clone and run immediately.

100% free, always. You get:

AI agents end-to-end - Starter agents for getting up and running fast, plus advanced builds like browser agents, voice agents, and MCP agents.

Multi-agent teams - Complete implementations of multi-agent orchestration patterns, from simple handoffs to swarm-style coordination.

RAG done right - Covers the full spectrum: basic RAG, agentic RAG, hybrid search, knowledge graph RAG with citations, vision RAG, and dataset routing.

Run anything locally - Every project supports OpenAI, Anthropic, Gemini, xAI, and open-source models like Llama and Qwen, so you can prototype on your laptop and scale to cloud without rewriting code.

Check it out now!

Andrej Karpathy spent two decades manually tuning neural networks, forming hypotheses, running experiments, reading papers, iterating.

Then he watched an AI agent do the same thing 700 times in two days while he wasn't even in the room. 🤯

His new open-source project, autoresearch, gives a coding agent a single GPU and a stripped-down LLM training file (~630 lines of Python), then lets it modify the code, train for 5 minutes, check the score, and loop - indefinitely, autonomously, committing every improvement to git.

In its first run on nanochat, the agent found ~20 real optimizations that Karpathy hadn't caught manually, from broken attention scaling to missing regularization. All of them were additive, all transferred to larger models, and stacked together, they pushed the "Time to GPT-2" leaderboard down 11%.

The researcher's job reduces to writing a program.md file that describes the research direction; the agent handles all code modifications, training runs, and result evaluation autonomously.

Key Highlights:

Autonomous experiment loop - The agent reads program.md, modifies the training code (architecture, hyperparameters, optimizers), runs a fixed 5-minute training cycle, and commits improvements to a git branch automatically.

Real results on a tuned codebase - The ~20 improvements the agent discovered were additive and transferred from smaller (depth=12) to larger (depth=24) models, proving these aren't just noise on a toy setup.

Minimal, single-GPU design - The entire codebase fits within an LLM's context window (~630 lines, one file, PyTorch only), making it easy for any coding agent to reason about the full training pipeline holistically.
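The modify, train, score, commit loop described above is easy to picture as a keep-if-better search. Here's a minimal Python sketch of that shape. It is not autoresearch's code: train_and_score is a toy function standing in for the 5-minute training run, propose_change stands in for the agent editing the training file, and the history list plays the role of the git branch. All names and numbers are assumptions for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class Experiment:
    config: dict
    score: float  # assumption: lower is better, like a validation loss


def train_and_score(config: dict) -> float:
    """Toy stand-in for a fixed-budget training run; minimum at lr=0.03, wd=0.1."""
    return (config["lr"] - 0.03) ** 2 + (config["weight_decay"] - 0.1) ** 2


def propose_change(config: dict, rng: random.Random) -> dict:
    """Stand-in for the agent editing the code: nudge one knob at random."""
    key = rng.choice(sorted(config))
    candidate = dict(config)
    candidate[key] = max(0.0, candidate[key] + rng.gauss(0, 0.02))
    return candidate


def research_loop(start: dict, steps: int, seed: int = 0) -> list:
    """Run `steps` experiments, keeping only changes that improve the score."""
    rng = random.Random(seed)
    best = Experiment(start, train_and_score(start))
    history = [best]  # the kept "commits", oldest first
    for _ in range(steps):
        candidate = propose_change(best.config, rng)
        score = train_and_score(candidate)
        if score < best.score:  # improvement: keep it and build on it
            best = Experiment(candidate, score)
            history.append(best)
        # otherwise the change is discarded and the loop tries again
    return history


if __name__ == "__main__":
    kept = research_loop({"lr": 0.1, "weight_decay": 0.0}, steps=200)
    print(f"{len(kept) - 1} improvements kept, final score {kept[-1].score:.6f}")
```

Because every kept change builds on the previous best, the history reads like the branch of stacked improvements the project describes; the real system swaps the random nudge for an agent that reasons about the code.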

It’s already spreading btw! Shopify CEO Tobi Lütke adapted autoresearch overnight for an internal project and reported a 19% improvement in validation scores, with the agent-tuned smaller model outperforming a manually configured larger one.

Quick Bites

Anthropic releases multi-agent bug hunters for PRs

Anthropic shipped Code Review for Claude Code, a multi-agent code review system where you open a PR, and a team of agents fans out in parallel to hunt bugs, verify findings, and rank them by severity. Anthropic has been dogfooding it internally, where substantive review comments jumped from 16% to 54% of PRs, with less than 1% of findings marked incorrect. It's now in research preview for Team and Enterprise plans, billed on token usage at roughly $15–25 per review.
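The fan-out, verify, rank flow can be sketched in a few lines of Python. This is a toy under loud assumptions, not how Anthropic's system works: the "reviewers" here are simple pattern checks instead of agents, verify is a trivial second pass, and the Finding fields and severity numbers are invented for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    reviewer: str
    line: int       # 1-based line number in the diff
    message: str
    severity: int   # assumption: higher means worse


def find_eval(diff: list) -> list:
    """Toy reviewer: flag any line that calls eval()."""
    return [Finding("eval", i, "eval() on untrusted input", 3)
            for i, line in enumerate(diff, 1) if "eval(" in line]


def find_bare_except(diff: list) -> list:
    """Toy reviewer: flag bare except clauses."""
    return [Finding("bare-except", i, "bare except hides errors", 2)
            for i, line in enumerate(diff, 1) if line.strip() == "except:"]


def verify(finding: Finding, diff: list) -> bool:
    """Second pass: drop findings that point at commented-out lines."""
    return not diff[finding.line - 1].lstrip().startswith("#")


def review(diff: list, reviewers: list) -> list:
    # Fan out: every reviewer scans the same diff in parallel.
    with ThreadPoolExecutor() as pool:
        batches = list(pool.map(lambda reviewer: reviewer(diff), reviewers))
    findings = [f for batch in batches for f in batch]
    # Verify, then rank: most severe first, ties broken by line number.
    verified = [f for f in findings if verify(f, diff)]
    return sorted(verified, key=lambda f: (-f.severity, f.line))


if __name__ == "__main__":
    diff = ["try:", "    x = eval(user_input)", "except:", "    pass", "# eval(old)"]
    for f in review(diff, [find_eval, find_bare_except]):
        print(f.severity, f.line, f.message)
```

The separate verify step is what keeps the false-positive rate down in this sketch: the eval reviewer flags the commented-out line too, and the second pass throws that finding away before ranking.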

Agents can now get their own credit cards

Slash shipped an MCP server for agentic commerce that lets AI agents create cards, set spend limits, and send payments. Basically, your full business banking stack, accessible via three tool primitives. Security is handled through RSA-OAEP encryption so agents never see raw card numbers, and a human-in-the-loop mode lets agents propose write actions that require manual approval before executing. It’s available now!

LangChain automated its own sales & GTM pipeline

LangChain's sales reps used to spend 15 minutes per lead just toggling between tabs. So they built an agent to do it for them! Their GTM agent watches Salesforce for new leads, runs contact history checks, researches across CRM and web, and delivers drafts to Slack with reasoning attached. LangChain reports 250% higher conversion rates and 86% weekly active usage across the team. They published the full build log with architecture decisions, eval pipelines, and a neat per-rep memory system. Definitely one to bookmark!

ICYMI: Anthropic launched a Claude Community Ambassadors program, and applications are open. Ambassadors get event sponsorship, monthly API credits, pre-release feature access, and a private Slack with the Anthropic team, in exchange for organizing local builder events in their city. No developer title required, and it's global with no location restrictions. Apply here.

Tools of the Trade

Paperclip - Open-source tool that orchestrates a team of AI agents to run a business. Bring your own agents, assign goals, and track your agents' work and costs from one dashboard. It looks like a task manager, but under the hood it has org charts, budgets, governance, goal alignment, and agent coordination.

Vercel's agent-browser - A headless browser automation CLI that lets AI agents navigate, screenshot, extract data, and interact with web pages using simple commands. The new Vercel Sandbox skill adds on-demand isolated browser environments, so agents can spin up a sandboxed browser session without any local setup.

Terminal Use - Deploy coding and research agents with sandboxed filesystems. Think Vercel, but for agents. First-class storage decoupled from task lifecycle.

VS Code Agent Kanban - Persistent task memory for AI coding agents using markdown files and YAML frontmatter. Your agent remembers what it was working on across sessions. GitOps-friendly.

Awesome LLM Apps - A curated collection of LLM apps with RAG, AI Agents, multi-agent teams, MCP, voice agents, and more. The apps use models from OpenAI, Anthropic, Google, and open-source models like DeepSeek, Qwen, and Llama that you can run locally on your computer.

(Now accepting GitHub sponsorships)

Hot Takes

A “computer” used to be a job title.

Then a computer became a thing humans used.

Now a computer is becoming a thing computers use.

Not knowing how to code giving you an advantage is absolute nonsense.

The more you understand, the better your prompts, the better the feedback you give, the better product you ship.

What will change is that the intricacies of syntax, compilers, module systems, the finer details of type systems, won’t matter as much to everyone.

But you should absolutely understand how the pieces fit together. From syscall to pixels. Learn how data flows, because you’ll be able to secure your systems. Learn about performance, because you’ll be able to push your agent further. Learn about APIs, because they determine how to integrate systems. Learn about how systems fail, because you’ll be able to make reliable programs.

That’s all for today! See you tomorrow with more such AI-filled content.

Don’t forget to share this newsletter on your social channels and tag Unwind AI to support us!

PS: We curate this AI newsletter every day for FREE, and your support is what keeps us going. If you find value in what you read, share it with at least one, two (or 20) of your friends 😉
