Karpathy's AI Coding Agent Rant in a Claude.md File

+ Google's best engineering practices in 20 Skills

Today’s top AI Highlights:

& so much more!

Read time: 3 mins

AI Tutorial

Six AI agents run my entire life while I sleep.

Not a demo. Not a weekend project.

A real team that works 24/7, making sure I'm never behind. Research done. Content drafted. Code reviewed. Newsletter ready. By the time I open Telegram in the morning, they've already put in a full shift.

By the end of this, you will understand exactly how to build an autonomous AI agent team that runs while you sleep.

We share hands-on tutorials like this every week, designed to help you stay ahead in the world of AI. If you're serious about leveling up your AI skills and staying ahead of the curve, subscribe now and be the first to access our latest tutorials.

Latest Developments

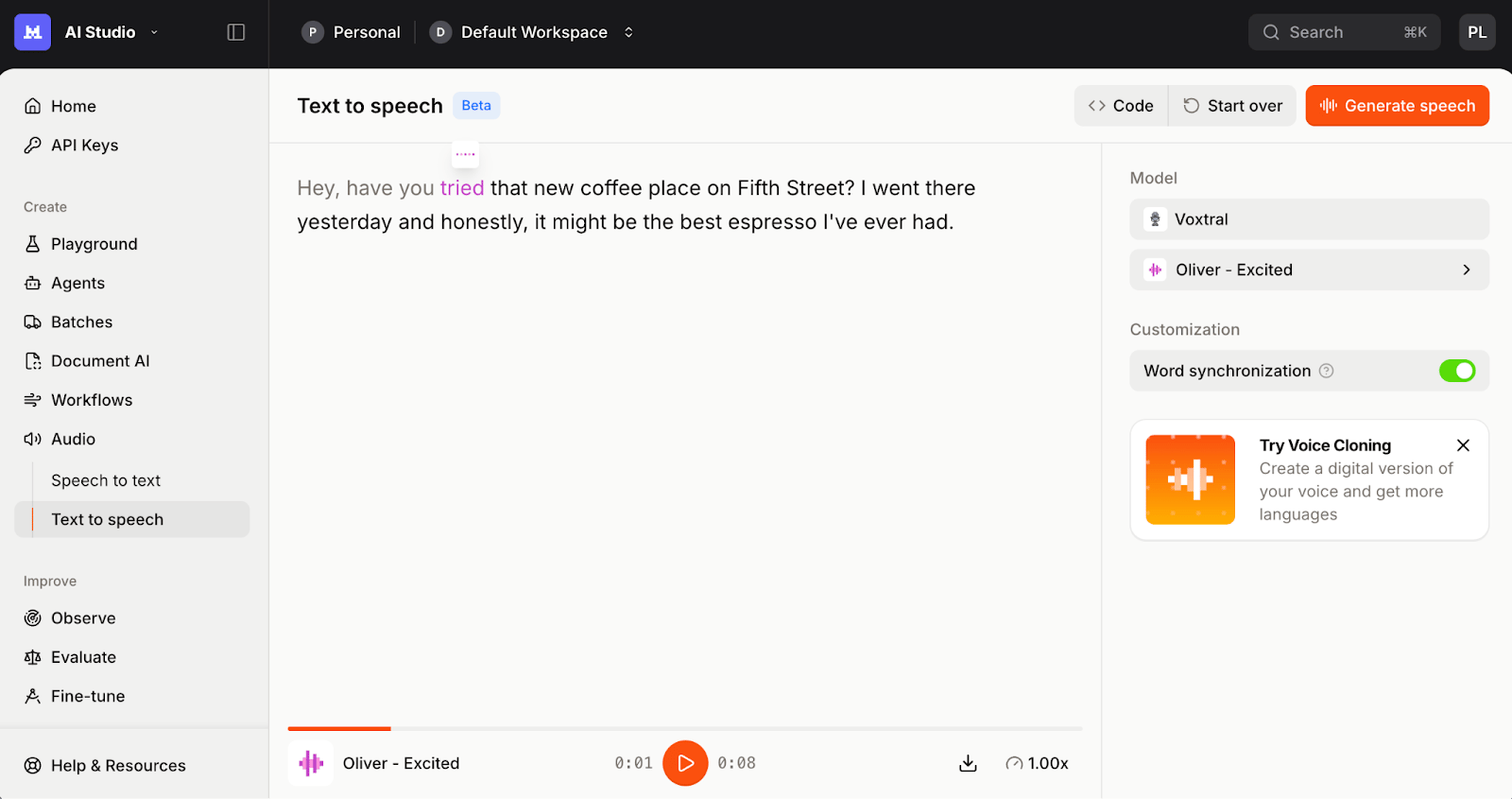

Voxtral TTS is a lightweight 4B parameter text-to-speech model with state-of-the-art performance in multilingual voice generation. It goes beyond reading text aloud, capturing a speaker's personality: natural pauses, rhythm, intonation, and emotional dexterity. Available now via API, Mistral Studio, and open weights on Hugging Face.

Key highlights:

Emotion-aware speech generation - Contextual understanding (neutral, happy, sarcastic, and more) determines whether output sounds natural or robotic. Voxtral TTS models both meaning and personality, not just pronunciation.

Zero-shot custom voice context in 9 languages - Provide a 5-25 second voice prompt and the model adapts to accent, inflection, rhythm, and even disfluencies. Supports English, French, German, Spanish, Dutch, Portuguese, Italian, Hindi, and Arabic.

Cross-lingual voice adaptation - Generate speech in one language using a voice prompt in another. A French voice prompt with English text produces natural French-accented English, useful for cascaded speech-to-speech translation.

70ms model latency, ~9.7x real-time factor - Built for voice agent applications. Streams natively and integrates into any existing STT and LLM stack.

Beats ElevenLabs Flash v2.5 on naturalness - Human evaluations show Voxtral TTS wins on flagship voices (58.3% vs 41.7%) and voice customization (68.4% vs 31.6%).
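To put the speed figures in perspective, here is a rough back-of-the-envelope sketch. It assumes "~9.7x real-time factor" means the model produces about 9.7 seconds of audio per second of compute, which is our reading and not something the announcement spells out. The function name is illustrative.

```python
def synthesis_time_seconds(audio_seconds: float, speedup: float = 9.7) -> float:
    """Estimate compute time for a clip.

    Assumes `speedup` seconds of audio are generated per second of compute
    (our interpretation of the quoted real-time factor).
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return audio_seconds / speedup


# Estimated generation time for a few clip lengths.
for clip in (5, 30, 60):
    print(f"{clip:>2}s of speech: ~{synthesis_time_seconds(clip):.2f}s to generate")
```

Under that assumption, a full minute of speech takes roughly six seconds to generate, which is why streaming output matters more to a voice agent than total generation time.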

Google's Addy Osmani just packaged 14+ years of senior engineering judgment into Markdown files, and now your AI coding agent can follow them.

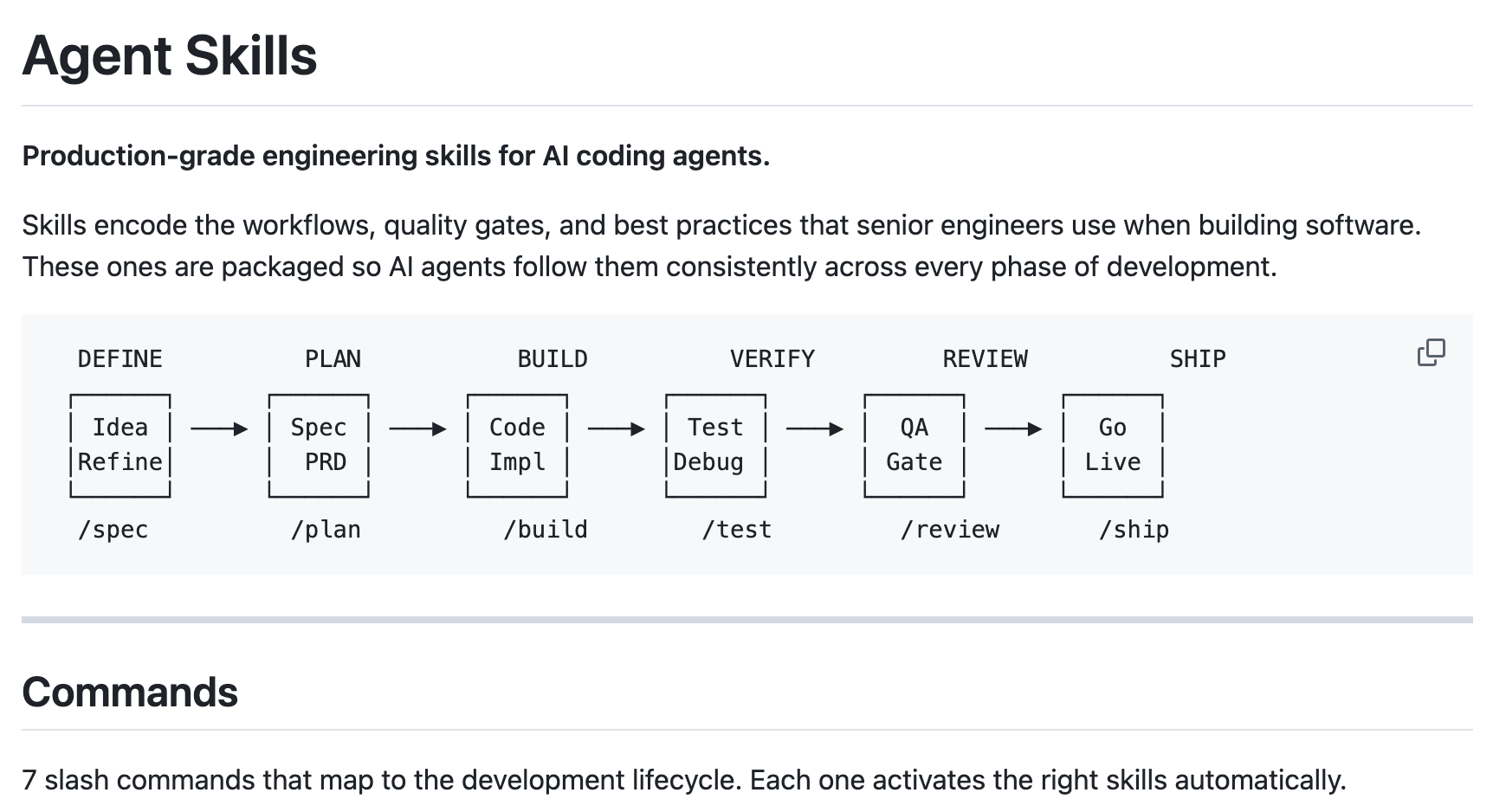

He open-sourced Agent Skills, a collection of 20 structured engineering workflows as Skills and 7 slash commands that cover the full software development lifecycle, from writing specs to shipping code. Each skill is a step-by-step process with verification gates, defined inputs/outputs, and anti-rationalization tables that prevent your agent from taking shortcuts like skipping tests or jumping straight to code.

The skills map to six development phases (Define → Plan → Build → Verify → Review → Ship), and they bake in real engineering principles like Hyrum's Law, Chesterton's Fence, and trunk-based development.

The repo has already crossed 14K stars in just a couple of days!

Key Highlights:

Workflows, Not Prompts - Each of the 20 skills has defined inputs, outputs, and verification gates. The agent can't skip to the next step without completing the current one.

7 Slash Commands - /spec, /plan, /build, /test, /review, /code-simplify, and /ship map to the full development lifecycle, making it easy to invoke the right process at the right time.

Three Specialist Personas - Ships with code-reviewer, test-engineer, and security-auditor agent personas you can load for targeted review workflows.
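The "verification gate" idea is easy to picture in code. The sketch below is an illustrative toy, not code from the Agent Skills repo (which ships Markdown files). `Step`, `run_workflow`, and the example gates are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    run: Callable[[dict], None]   # does the work, writing results into state
    gate: Callable[[dict], bool]  # verification gate: must pass to proceed


def run_workflow(steps: list[Step], state: dict) -> list[str]:
    """Run steps in order and stop at the first step whose gate fails."""
    completed = []
    for step in steps:
        step.run(state)
        if not step.gate(state):
            raise RuntimeError(f"gate failed after '{step.name}'; later steps skipped")
        completed.append(step.name)
    return completed


# Hypothetical three-step workflow: each gate checks the previous step's output.
steps = [
    Step("spec", lambda s: s.update(spec="add login"), lambda s: bool(s.get("spec"))),
    Step("build", lambda s: s.update(code="def login(): ..."), lambda s: "code" in s),
    Step("test", lambda s: s.update(tests_passed=True), lambda s: s.get("tests_passed") is True),
]

print(run_workflow(steps, {}))
```

The point of the structure is that "skip the tests" is not a path the runner offers: a failed gate raises before any later step can start.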

You need to check it out now!

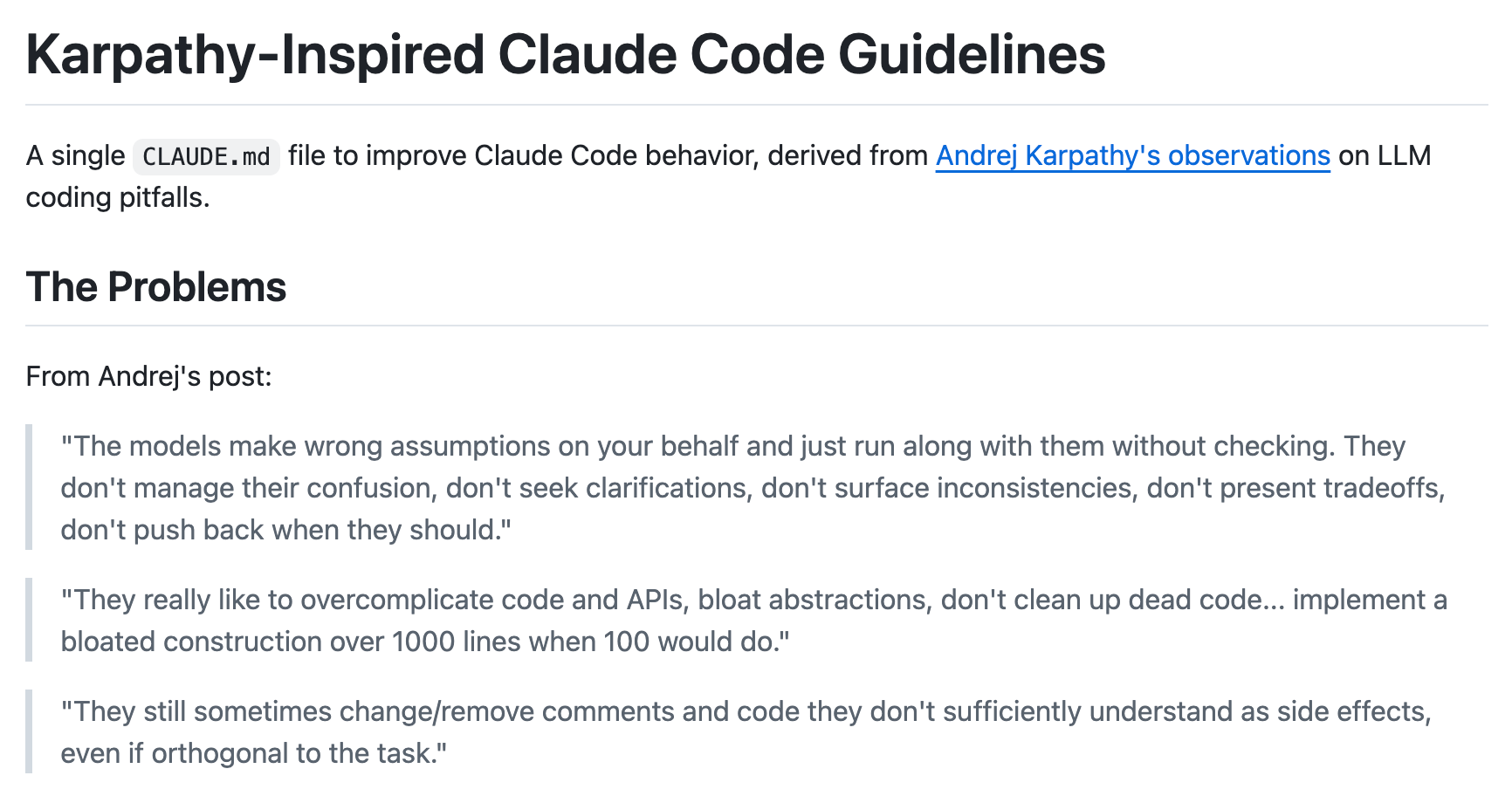

Andrej Karpathy diagnosed exactly how LLMs fail at coding. And now there's a one-file fix you can install in 10 seconds.

andrej-karpathy-skills is an open-source CLAUDE.md file by developer Forrest Chang that encodes four behavioral principles directly into Claude Code, all derived from Karpathy's observations from building with AI coding agents.

Back in January, Karpathy documented how he shifted from mostly manual coding to 80% agent coding in a matter of weeks. But he was equally blunt about the failure modes: agents making wrong assumptions without checking, over-complicating everything, and quietly messing with unrelated code as side effects.

This project turns those observations into guardrails. Drop the file in your project root, and Claude Code automatically picks up the constraints for cleaner diffs, fewer rewrites, and agents that pause to ask before assuming.

Key Highlights:

Assumptions get surfaced, not buried - The file forces the agent to explicitly state what it's assuming and ask for clarification when multiple interpretations exist, instead of silently picking one.

Simplicity is enforced, not suggested - If 200 lines could be 50, rewrite. No speculative flexibility, no abstractions for single-use code, no features nobody asked for.

Surgical precision on edits - The agent only modifies what's directly relevant to the task — no touching adjacent comments, no reformatting, no surprise refactors.

Success criteria over step-by-step hand-holding - Inspired by Karpathy's observation that LLMs are best when looping toward verifiable goals: write the test first, then make it pass.
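As a small illustration of the last principle, here is what "write the test first, then make it pass" looks like in practice. This is our own toy example, not content from the CLAUDE.md file, and `slugify` is a hypothetical task.

```python
import re


# Step 1: the success criterion, written before any implementation exists.
def test_slugify():
    assert slugify("Hello, World!") == "hello-world"
    assert slugify("  many   spaces ") == "many-spaces"
    assert slugify("") == ""


# Step 2: the smallest implementation that makes the test pass.
def slugify(text: str) -> str:
    words = re.findall(r"[a-z0-9]+", text.lower())
    return "-".join(words)


test_slugify()  # the agent loops on this until it stops raising
```

The test gives the agent a verifiable goal to loop toward, and the implementation stays at a few lines because nothing beyond the stated criteria was asked for.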

Quick Bites

OpenClaw’s latest update might make your Claws better with GPT-5.4

A huge release dropped by the OpenClaw team today with a formal GPT-5.4 vs Opus 4.6 agentic parity gate baked into the release pipeline. It’s a clear signal that the project is treating GPT-5.4 as a first-class citizen post-Anthropic lockout. The update also ships ChatGPT memory import ingestion, richer video generation modes, and a pile of fixes for the OAuth/transport bugs that plagued early 5.4 adopters. If you rage-quit Claude after the April 4 subscription crackdown and found GPT-5.4 underwhelming, this is the release worth retesting with retuned bootstrap files.

Get 100x from your AI Agents with Thin Harness and Fat Skills

If you've been thinking about how to structure AI agents, Garry Tan's "thin harness, fat skills" essay is a really satisfying read. The gist: stop cramming logic into bloated orchestration layers with 40 MCP tools. Instead, put judgment into rich markdown skill files and push execution into fast, deterministic tooling underneath. He walks through a real system at YC where matching skills self-improve by analyzing mediocre event feedback, which is exactly the kind of compounding loop that makes you go "oh, right, that's how this should work." Do give it a read.
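A minimal sketch of the split the essay describes, under our own assumptions: the skill text, tool name, and `build_prompt` helper below are hypothetical and not taken from Garry Tan's system. Judgment sits in prose, execution sits in plain functions, and the harness only glues them together.

```python
from typing import Callable

# "Fat" skill: the judgment lives in prose (normally a markdown file on disk).
SKILLS: dict[str, str] = {
    "match-attendees": (
        "# Match attendees\n"
        "Pair people who share at least one interest.\n"
        "Prefer pairs from different companies.\n"
    ),
}


# Deterministic tooling: a plain function, no model involved.
def shared_interests(a: set[str], b: set[str]) -> list[str]:
    return sorted(a & b)


TOOLS: dict[str, Callable] = {"shared_interests": shared_interests}


def build_prompt(skill_name: str, task: str) -> str:
    """Thin harness: combine the skill text, the tool names, and the task."""
    tool_list = ", ".join(sorted(TOOLS))
    return f"{SKILLS[skill_name]}\nAvailable tools: {tool_list}\nTask: {task}"


print(build_prompt("match-attendees", "pair Ana and Bo"))
print(shared_interests({"ai", "go"}, {"go", "rust"}))
```

Improving the system then means editing the skill text or the tool, not adding branches to the harness.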

Own your memory for your agent harness

The thing that makes your AI agent actually good over time isn't the model, it's all the context it's built up about you, your work, and your preferences. Harrison Chase connects this nicely to why owning your agent harness matters: when you use a closed agent harness, you're giving away your agent's memory. And memory is really just context! Model providers know this, which is why they're quietly pulling more state behind their APIs. His story about accidentally losing his email agent's memory and having to reteach it everything from scratch is a perfect example of why this matters. Another interesting read coming on X!

Leaked screenshots from Anthropic show Full-Stack App Builder

Another (rumoured) leak from Anthropic showing a Lovable-like interface where you can describe apps in natural language, pick templates for chatbots, games, or photo albums, and it spins up full-stack apps. Powered by Claude Opus 4.6, it offers live previews, one-click publishing, and more. Not sure how true this is, but we won’t be surprised!

Tools of the Trade

Multica: Turns coding agents into real teammates. Assign issues to an agent like you'd assign to a colleague, and they'll pick up the work, write code, report blockers, and update statuses autonomously. Your agents show up on the board, participate in conversations, and compound reusable skills over time. Works with Claude Code, Codex, OpenClaw, and OpenCode.

RCLI: A fully on-device voice AI for macOS that runs a complete STT → LLM → TTS pipeline natively on Apple Silicon, no API keys needed. It can control 38 macOS actions by voice (Spotify, volume, screenshots, etc.), do local RAG over your documents with ~4ms retrieval, and claims up to 550 tok/s on M3+.

just-bash: A sandboxed bash implementation in TypeScript from Vercel, designed to be the execution environment AI agents use when they need to run shell commands. It provides an in-memory filesystem, broad Unix command coverage, and configurable execution limits to prevent runaway scripts.

Awesome LLM Apps - A curated collection of LLM apps with RAG, AI Agents, multi-agent teams, MCP, voice agents, and more. The apps use models from OpenAI, Anthropic, Google, and open-source models like DeepSeek, Qwen, and Llama that you can run locally on your computer.

(Now accepting GitHub sponsorships)

Hot Takes

vibecoding is fentanyl for people with an iq over 120

OpenAI is starting to look like Google in 2002 & Anthropic is starting to look like Yahoo in 2002.

~ kache

That’s all for today! See you tomorrow with more such AI-filled content.

Don’t forget to share this newsletter on your social channels and tag Unwind AI to support us!

PS: We curate this AI newsletter every day for FREE, your support is what keeps us going. If you find value in what you read, share it with at least one, two (or 20) of your friends 😉
