Karpathy’s Autoresearch for Agent Engineering

+ Google Gemma 4 with Skills on-device

Today’s top AI Highlights:

& so much more!

Read time: 3 mins

AI Tutorial

Six AI agents run my entire life while I sleep.

Not a demo. Not a weekend project.

A real team that works 24/7, making sure I'm never behind. Research done. Content drafted. Code reviewed. Newsletter ready. By the time I open Telegram in the morning, they've already put in a full shift.

By the end of this, you will understand exactly how to build an autonomous AI agent team that runs while you sleep.

We share hands-on tutorials like this every week, designed to help you stay ahead in the world of AI. If you're serious about leveling up your AI skills and staying ahead of the curve, subscribe now and be the first to access our latest tutorials.

Latest Developments

Karpathy's autoresearch let an AI agent run 100 ML experiments overnight on a single GPU. Now someone's applied the same idea to agent engineering itself.

Kevin Gu just open-sourced AutoAgent, a library where a meta-agent autonomously improves a task agent's entire harness - prompts, tools, orchestration logic - by running thousands of parallel sandboxed experiments.

Point it at a task domain with evals, walk away for 24 hours, and come back to an agent that built its own domain-specific tooling and verification loops from scratch.

AutoAgent hit #1 on both SpreadsheetBench (96.5%) and TerminalBench (55.1%), beating every hand-engineered entry on both leaderboards.

The sharpest finding: same-model pairings (Claude meta + Claude task) crush cross-model setups because the meta-agent implicitly understands how the inner model reasons. The team calls this "model empathy."

Key Highlights:

Autoresearch for Agents: Where Karpathy's loop edits training code and hill-climbs on validation loss, AutoAgent edits an agent's harness and hill-climbs on benchmark scores.

Emergent Behaviors: Without being programmed to, the agent invented spot-checking for faster iteration, built its own verification loops, wrote per-task unit tests, and spun up sub-agents for complex domains.

Traces Over Scores: Feeding only benchmark scores barely moved the needle. Sharing full reasoning trajectories with the meta-agent is what enabled targeted, meaningful harness edits.

Potential: Every domain needs a different agent harness, and no team can hand-tune hundreds of them. AutoAgent is designed to continuously spin up and optimize task-specific agents across an organization.
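To make the "edit the harness, hill-climb on the score" idea concrete, here is a minimal sketch of that loop in Python. It is not AutoAgent's code or API: `hill_climb`, `toy_propose`, and `toy_evaluate` are hypothetical names, the harness is reduced to a small dict of settings, and the eval is a toy scoring function standing in for a real benchmark run.

```python
import random


def hill_climb(harness, propose_edit, evaluate, steps=50, seed=0):
    """Greedy loop: keep a candidate harness only if its eval score improves."""
    rng = random.Random(seed)
    best, best_score = harness, evaluate(harness)
    history = [best_score]
    for _ in range(steps):
        candidate = propose_edit(best, rng)   # the meta-agent's job in a real system
        score = evaluate(candidate)           # a sandboxed benchmark run in a real system
        if score > best_score:
            best, best_score = candidate, score
        history.append(best_score)
    return best, best_score, history


# Toy stand-ins (assumptions for illustration only).
def toy_evaluate(harness):
    """Pretend benchmark: rewards verification, 3 retries, and temperature 0.2."""
    score = 1.0 if harness["verify"] else 0.0
    score -= abs(harness["max_retries"] - 3)
    score -= abs(harness["temperature"] - 0.2)
    return score


def toy_propose(harness, rng):
    """Return a copy of the harness with one randomly chosen setting changed."""
    candidate = dict(harness)
    key = rng.choice(sorted(candidate))
    if key == "verify":
        candidate[key] = not candidate[key]
    elif key == "max_retries":
        candidate[key] = max(0, candidate[key] + rng.choice([-1, 1]))
    else:  # temperature, clamped to [0, 1]
        stepped = candidate[key] + rng.choice([-0.1, 0.1])
        candidate[key] = round(min(1.0, max(0.0, stepped)), 1)
    return candidate


if __name__ == "__main__":
    start = {"max_retries": 0, "verify": False, "temperature": 0.9}
    best, best_score, _ = hill_climb(start, toy_propose, toy_evaluate, steps=200)
    print(best, best_score)
```

In the real setting, the proposal step would presumably read full reasoning traces rather than just the score, which is the point of the "Traces Over Scores" highlight above.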

ICYMI: Anthropic's Claude Code lead, Boris Cherny, announced that Claude subscriptions no longer cover third-party tools like OpenClaw, effective April 4 at 12 pm PT. If you were running your agent workflows on a Pro or Team subscription, that access is now gone, and you’ll need an Anthropic API key with pay-as-you-go billing.

Don’t fret, though. We audited our OpenClaw agents and workflows during the weekend, and these are our findings - we will keep using Claude Opus 4.6 for orchestrating the agents, and each agent could use GPT-5.4 (or GPT-5.3-codex for coding workflows). Peter and team have made several personality upgrades to the model so it feels less corporate, more alive, and still “professionally unwilling to do dumb shit.”

Other good options are Kimi K2.5 and MiniMax M2.1!

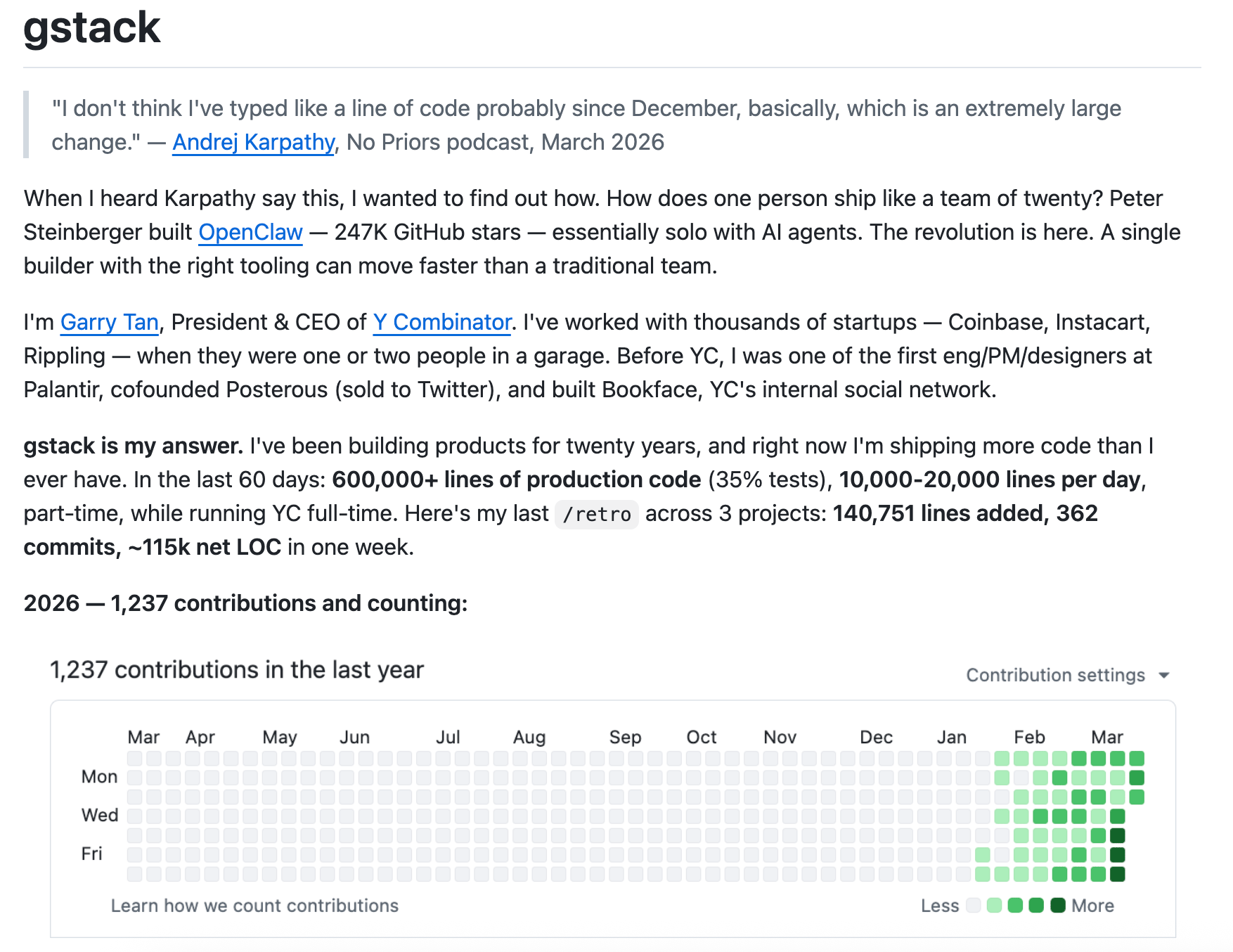

The head of Y Combinator is averaging 10,000–20,000 lines of code per day, and he just open-sourced the exact setup that makes it possible.

gstack by Garry Tan is a collection of 20+ opinionated slash commands for Claude Code that assign the AI distinct engineering roles, from a CEO who challenges your product framing to a QA lead that opens a real browser, clicks through your app, finds bugs, and writes regression tests.

It's not a new framework; it's a workflow layer that mirrors how a well-run startup actually operates: think, plan, build, review, test, ship.

The repo hit 54K+ stars in weeks, sparked a viral debate about AI-assisted development at SXSW, and is one of the fastest-growing repos of 2026.

Key Highlights:

Role-Based Workflow: Over 20 slash commands split the dev lifecycle into distinct specialist modes (planning, review, design, QA, security, shipping), preventing the "one agent doing everything at once" failure mode.

Persistent Browser Runtime: A long-lived headless Chromium daemon keeps cookies, tabs, and login state across commands, enabling real browser-based QA with ~100ms latency per action after startup.

Second Opinions: The /codex command pulls in an independent review from OpenAI's Codex CLI, then cross-references findings with Claude's /review to surface what each model caught or missed.

30-Second Install: One paste command into Claude Code sets up the entire skill pack, and it also works with Codex, Gemini CLI, and Cursor via SKILL.md.

Quick Bites

Gemma 4's ecosystem exploded overnight

Google published an "Agentic Skills on the Edge" guide: multi-step planning, autonomous actions, and code gen all running on-device. Unsloth GGUFs fit the E4B model in 6GB RAM. Run ollama run gemma4:26b on your Mac and skip the API bill.

Netflix open-sources VOID, an object removal model

Of all the companies you'd expect to release an open-source video AI model, Netflix probably wasn't on the list. But here we are! They just dropped VOID, a video editing model that goes beyond simple object removal. It actually re-simulates how the rest of the scene should behave once something's been erased. Remove a person diving into a pool, and the water stays undisturbed; remove a car from a crash, and the surviving vehicle keeps driving naturally. Outperforms Runway and other baselines by a wide margin in user preference tests. Now available on Hugging Face.

Your OpenClaw agents can now dream

New from OpenClaw: "Dreaming," a background memory consolidation layer to solve for long-term recall in agents. Previously, important context either got explicitly saved to MEMORY.md or slowly decayed in daily note files. Dreaming observes which notes the agent actually retrieves over time, scores recall patterns across six weighted signals, and auto-promotes only the entries that pass every gate into permanent memory. It runs on a configurable sleep-cycle cadence, it's fully explainable, and it's off by default. Your lobster literally sleeps on it.
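A rough sketch of what "score across weighted signals, promote only if every gate passes" could look like. This is not OpenClaw's implementation: the six signal names, their weights, and the three gates below are all hypothetical, since the announcement summarized here does not list them. Each signal is assumed to be normalized to the range 0 to 1.

```python
# Hypothetical signals and weights; only the count of six comes from the source.
WEIGHTS = {
    "retrieval_count": 0.30,
    "recency": 0.20,
    "distinct_sessions": 0.20,
    "query_diversity": 0.10,
    "explicit_reference": 0.10,
    "stability": 0.10,
}


def weighted_score(signals):
    """Combine the normalized signals (each in [0, 1]) into a single score."""
    return sum(WEIGHTS[name] * signals[name] for name in WEIGHTS)


# Hypothetical gates: a note must pass all of them to be promoted.
GATES = [
    ("overall score >= 0.6", lambda s: weighted_score(s) >= 0.6),
    ("retrieved in more than one session", lambda s: s["distinct_sessions"] > 0.0),
    ("recently retrieved", lambda s: s["recency"] >= 0.3),
]


def evaluate_note(signals):
    """Return the decision plus the gates that failed, so it stays explainable."""
    failed = [label for label, check in GATES if not check(signals)]
    return {
        "score": round(weighted_score(signals), 3),
        "promote": not failed,
        "failed_gates": failed,
    }


if __name__ == "__main__":
    stale_note = {name: 1.0 for name in WEIGHTS}
    stale_note["recency"] = 0.0  # used often in the past, but not lately
    print(evaluate_note(stale_note))
```

Returning the list of failed gates alongside the decision is one simple way to keep a promotion rule inspectable.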

Mintlify replaced RAG with a virtual filesystem

RAG is the default retrieval pattern for AI apps. Embed your docs, vector search at query time, hope the right chunks surface. Mintlify threw all of it out. They replaced their entire RAG pipeline with a virtual filesystem for AI documentation. Instead of chunking the docs' structure into vectors and losing the relationships, Mintlify exposes it as a filesystem that AI agents can navigate. The agent browses documentation the way a developer would browse a codebase. No sandboxes, no micro-VMs. Combined with the Hermes memory plugin system released recently, the retrieval landscape is fracturing fast. RAG isn't dying, but it's no longer the only pattern.
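For a feel of the pattern, here is a minimal in-memory sketch of docs exposed as a filesystem an agent could call as tools. It is an illustration under our own assumptions, not Mintlify's implementation: the `VirtualDocsFS` class, its `ls`, `cat`, and `grep` methods, and the sample pages are all hypothetical.

```python
class VirtualDocsFS:
    """Read-only, in-memory view of doc pages addressed by path."""

    def __init__(self, pages):
        # pages maps a path such as "guides/auth.md" to the page text.
        self._pages = {self._norm(path): text for path, text in pages.items()}

    @staticmethod
    def _norm(path):
        """Normalize to a leading-slash path with no empty segments."""
        return "/" + "/".join(part for part in path.split("/") if part)

    def _prefix(self, directory):
        base = self._norm(directory)
        return base if base.endswith("/") else base + "/"

    def ls(self, directory="/"):
        """List immediate children; subdirectories get a trailing slash."""
        prefix = self._prefix(directory)
        entries = set()
        for path in self._pages:
            if path.startswith(prefix):
                head, _, rest = path[len(prefix):].partition("/")
                entries.add(head + "/" if rest else head)
        return sorted(entries)

    def cat(self, path):
        """Return the full text of one page (raises KeyError if missing)."""
        return self._pages[self._norm(path)]

    def grep(self, needle, directory="/"):
        """Return paths under directory whose text contains needle (case-insensitive)."""
        prefix = self._prefix(directory)
        return sorted(
            path
            for path, text in self._pages.items()
            if path.startswith(prefix) and needle.lower() in text.lower()
        )


if __name__ == "__main__":
    docs = VirtualDocsFS({
        "guides/quickstart.md": "Install the CLI.",
        "guides/auth.md": "Create an API key.",
        "api/users.md": "GET /users needs an API key.",
    })
    print(docs.ls("/"))
    print(docs.grep("api key"))
```

The agent gets whole pages and their place in the hierarchy instead of isolated chunks, which is the trade-off the paragraph above describes.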

Tools of the Trade

/ui-test: Agent skill that analyzes a PR diff, generates adversarial test cases across three planning rounds, and fans them out to sub-agents that test your UI in real browsers via the Browserbase CLI. It outputs a standalone HTML report with embedded failure screenshots and suggested fixes.

Apfel: An open-source CLI tool that wraps Apple's on-device ~3B parameter LLM (shipping with macOS Tahoe) and exposes it as a UNIX pipe-friendly command, an OpenAI-compatible local server, and an interactive chat - all via a single brew install. Supports MCP, JSON output, file attachments, and context trimming for the 4,096-token window.

Career-Ops: An open-source Claude Code skill that turns your terminal into a full job search pipeline. It scans 45+ company career pages, scores offers on a weighted A-F system, generates per-job ATS-optimized CVs, and tracks your entire pipeline. The author used it to process 740+ listings and land a Head of Applied AI role.

Awesome LLM Apps - A curated collection of LLM apps with RAG, AI Agents, multi-agent teams, MCP, voice agents, and more. The apps use models from OpenAI, Anthropic, Google, and open-source models like DeepSeek, Qwen, and Llama that you can run locally on your computer.

(Now accepting GitHub sponsorships)

Hot Takes

I'm calling it. AGI is already here – it's just not evenly distributed yet.

Before:

move to SF. have an idea. look for a tech cofounder. build a deck in the meantime. Months pass...

After:

codex/claude in one window, X in the other. Build demo. Make a video of the demo. Announce it online, make it go viral. Cofounders/customers/investors come to you...

That’s all for today! See you tomorrow with more such AI-filled content.

Don’t forget to share this newsletter on your social channels and tag Unwind AI to support us!

PS: We curate this AI newsletter every day for FREE, and your support is what keeps us going. If you find value in what you read, share it with at least one, two (or 20) of your friends 😉
