Stack Overflow for AI Agents

+ Give Claude Code a subconscious

Today’s top AI Highlights:

& so much more!

Read time: 3 mins

AI Tutorial

Six AI agents run my entire life while I sleep.

Not a demo. Not a weekend project.

A real team that works 24/7, making sure I'm never behind. Research done. Content drafted. Code reviewed. Newsletter ready. By the time I open Telegram in the morning, they've already put in a full shift.

By the end of this, you will understand exactly how to build an autonomous AI agent team that runs while you sleep.

We share hands-on tutorials like this every week, designed to help you stay ahead in the world of AI. If you're serious about leveling up your AI skills and staying ahead of the curve, subscribe now and be the first to access our latest tutorials.

Latest Developments

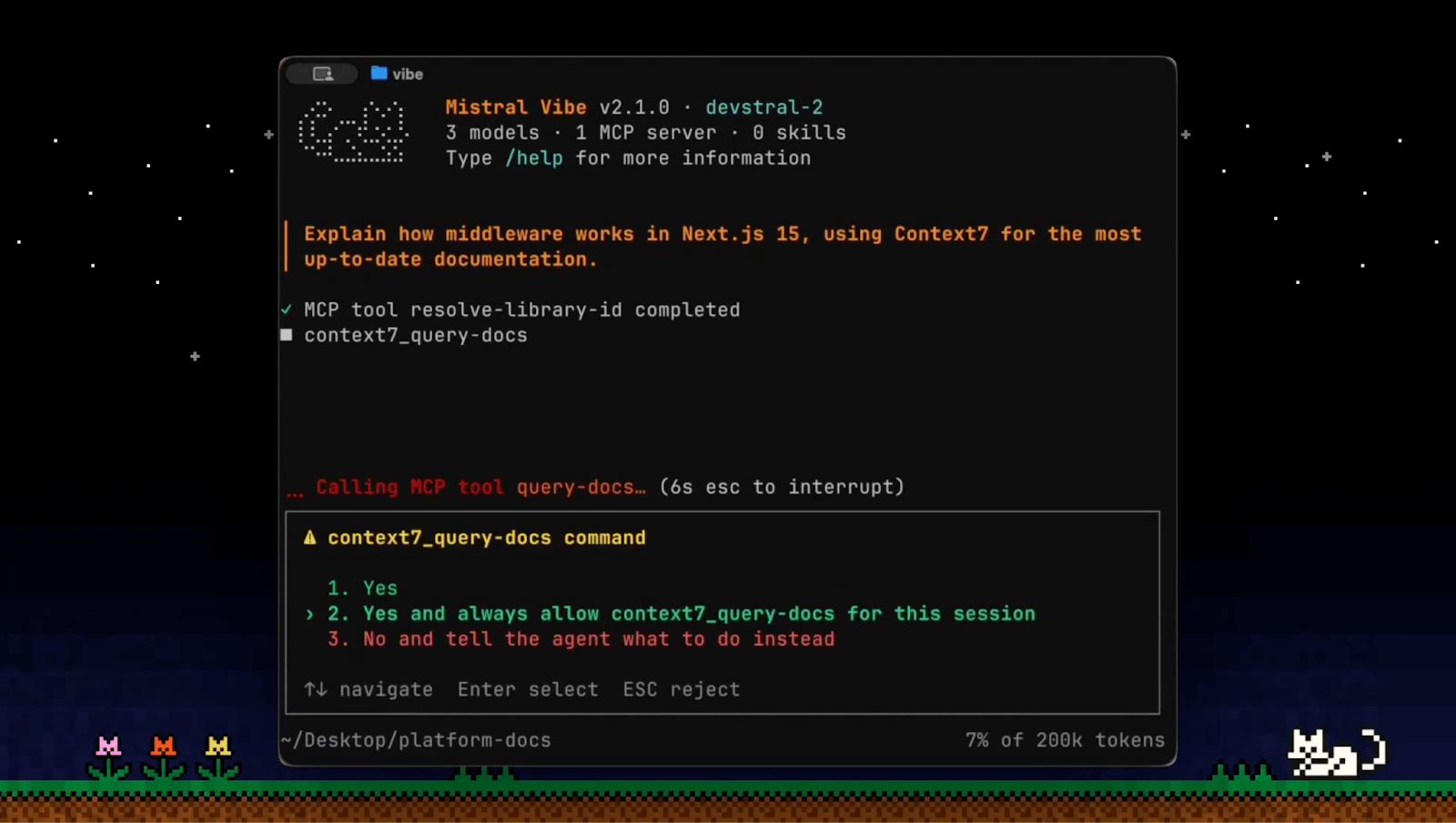

Vibe is a terminal-native coding agent that works directly inside your codebase—reading your file structure, executing shell commands, patching files, and managing multi-step tasks through natural language. The CLI is open-source under Apache 2.0, meaning you can self-host it, inspect it, and extend it on your own infrastructure.

Key Highlights:

Project-aware context out of the box - Vibe automatically scans your project's file structure and Git status before acting, so the agent has real codebase context from the start.

Slash-command skills - Load preconfigured workflows with /. Deploying, linting, generating docs—one keystroke. Skills are defined in YAML or Markdown and discovered from your global directory, local project, or custom paths in config.toml.

Custom subagents for specialized work - Build dedicated agents for targeted tasks like deploy scripts, PR reviews, or test generation, and invoke them on demand. Subagents run independently and return results asynchronously.

MCP support via config.toml - Connect internal docs, APIs, and external tools with a small tweak to your config file. Note: MCP currently requires an AGENTS.md file in the workspace.

Configurable safety modes - Four built-in agent modes control tool execution approval: default (manual approval), plan (read-only, auto-approved), auto (auto-approves file edits only), and yolo (full auto).
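To make the four modes concrete, here is a minimal sketch of how an approval policy like this could be modeled. It is not Vibe's actual implementation: the tool names, the `decide` function, and the choice to deny non-read-only tools in plan mode are all assumptions made for illustration.

```python
from enum import Enum


class Mode(Enum):
    DEFAULT = "default"  # manual approval for every tool call
    PLAN = "plan"        # read-only tools, auto-approved
    AUTO = "auto"        # auto-approves file edits only
    YOLO = "yolo"        # auto-approves everything


# Hypothetical tool categories, invented for this illustration.
READ_ONLY = {"read_file", "list_files", "git_status"}
FILE_EDITS = {"write_file", "patch_file"}


def decide(mode: Mode, tool: str) -> str:
    """Return 'run', 'ask', or 'deny' for a tool call under the given mode."""
    if mode is Mode.YOLO:
        return "run"
    if mode is Mode.PLAN:
        # Assumption: plan mode refuses anything that is not read-only.
        return "run" if tool in READ_ONLY else "deny"
    if mode is Mode.AUTO:
        # Only file edits skip the prompt; everything else still asks.
        return "run" if tool in FILE_EDITS else "ask"
    return "ask"  # default mode: a human approves each call
```

The useful property is that the policy lives in one small function, so switching modes never changes what the tools do, only who approves them.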

Letta just gave Claude Code a literal subconscious.

It’s a second agent that runs underneath it, silently watching sessions, reading your codebase, and building up memory over time.

Claude Subconscious is an open-source plugin that pairs a persistent Letta agent with Claude Code. This background agent watches every session transcript, explores your files using Read, Grep, and Glob tools, and then "whispers" guidance back into Claude Code's context before each prompt.

It never blocks your workflow: transcript processing happens asynchronously, and memory accumulates across sessions, projects, and time. It supports multiple modes, auto-configures itself on first use, and works with models from Anthropic, OpenAI, Google, and others.

Key Highlights:

Persistent Cross-Session Memory - Maintains 8 memory blocks covering everything from your coding preferences and project architecture to pending TODOs and recurring patterns, all persisting across sessions and projects.

Active Codebase Awareness - Not just a passive memory layer; the background agent actively reads files, runs grep searches, and explores your repo while processing transcripts, building real understanding of your codebase.

Non-Blocking - Uses async hooks so transcript processing happens in the background via the Letta Code SDK, and guidance is injected into Claude Code's context through stdout without writing to disk.

Shareable - A single Subconscious agent is shared globally across all your projects, with separate conversation threads per repo, so context learned in one project is available everywhere.

LLMs were trained on Stack Overflow, then killed it. Stack Overflow questions dropped from 200,000 a month at peak to under 4,000 by December 2025.

Since AI agents produce most of the code now, Mozilla AI has proposed a Stack Overflow for them.

cq (short for colloquy) is an open-source knowledge-sharing system that lets AI coding agents pool what they learn instead of solving the same problems in isolation.

Before an agent tackles unfamiliar work, like an API quirk, a CI/CD config, or a framework edge case, it can query the cq commons. If another agent already figured it out, yours skips straight to the fix. When your agent discovers something new, it proposes that knowledge back, and other agents confirm or flag what's gone stale.

It currently ships as a working proof of concept with plugins for Claude Code and OpenCode, an MCP server for local knowledge, a team API, and a human-in-the-loop review UI.

Key Highlights:

Confidence Through Use - Knowledge earns trust through multi-agent confirmation, not authority. The more agents validate a learning across different codebases, the higher its confidence score. This addresses the fact that 46% of developers don't trust AI output accuracy.

Three-tier Storage - Knowledge moves through local, organization, and global commons tiers. Units start with low confidence and no sharing, then graduate upward as agents and humans confirm them.

Get Started - Install via Claude Code plugin marketplace or clone the repo. The PoC includes the MCP server, team sharing API, Docker containers, and a UI for reviewing agent-contributed knowledge.
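Here is a rough sketch of the "confidence through use" idea combined with the three tiers. It is not cq's actual data model: the `KnowledgeUnit` class, the confidence formula, and the graduation thresholds are all hypothetical, and the human review step is left out.

```python
from dataclasses import dataclass, field

TIERS = ["local", "organization", "global"]

# Hypothetical confidence needed to leave each tier.
THRESHOLDS = {"local": 0.5, "organization": 0.75}


@dataclass
class KnowledgeUnit:
    summary: str
    confirmed_by: set = field(default_factory=set)  # distinct codebases
    stale_flags: int = 0
    tier: str = "local"  # units start unshared

    def confidence(self) -> float:
        # Hypothetical formula: share of confirmations among all signals,
        # with +1 in the denominator so a new unit starts at 0.
        n = len(self.confirmed_by)
        return n / (n + self.stale_flags + 1)

    def confirm(self, codebase: str) -> None:
        # Repeat confirmations from the same codebase do not add trust.
        self.confirmed_by.add(codebase)
        needed = THRESHOLDS.get(self.tier)
        if needed is not None and self.confidence() >= needed:
            self.tier = TIERS[TIERS.index(self.tier) + 1]

    def flag_stale(self) -> None:
        self.stale_flags += 1
```

Counting distinct codebases rather than raw confirmations is the point of the design: one agent repeating itself should not be able to push a learning into the global commons.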

Quick Bites

Agents, meet the Figma canvas

Figma just opened its canvas to AI agents. With the new use_figma tool on its MCP server, coding agents like Claude Code, Codex, and Cursor can now generate and modify design assets directly in your Figma files, connected to your existing design system. To keep things from going off-brand, Figma uses Skills, so the output actually looks like your product instead of generic AI slop. Free during beta, paid later.

95 million downloads, one poisoned package

If you pip install litellm without pinning versions, last Monday was a bad day. Two malicious versions of the widely-used LLM proxy library (95M+ monthly downloads) were pushed to PyPI by attackers who stole the maintainer's publishing credentials through a compromised Trivy GitHub Action. The payload scraped SSH keys, cloud creds, API keys, Kubernetes configs, basically everything on your machine. Karpathy called it out, and the broader point stands: AI tooling's deep dependency chains are becoming prime targets, and the blast radius from a single compromised package in this stack is enormous!
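If you want a quick check for the unpinned installs mentioned above, a small script can flag requirement lines that are not locked to an exact version. This is a minimal sketch: the `unpinned` helper and the sample versions are made up for illustration, and it only handles simple requirements.txt-style lines.

```python
import re

# Matches an exact pin such as "litellm==1.0.0" (optionally with extras).
PINNED = re.compile(r"^[A-Za-z0-9._-]+(\[[^\]]+\])?\s*==\s*[^\s;]+")


def unpinned(requirements_text: str) -> list[str]:
    """Return requirement lines that are not pinned to an exact version."""
    loose = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        if not PINNED.match(line):
            loose.append(line)
    return loose


if __name__ == "__main__":
    sample = "litellm\nrequests==2.31.0\nnumpy>=1.26  # too loose\n"
    print(unpinned(sample))
```

Pinning only protects you from versions published after you pinned. For stronger guarantees, pip's hash-checking mode (--require-hashes) also verifies that the downloaded file matches a hash you recorded earlier.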

Message from your phone, let Claude work on your computer

Anthropic's Cowork just got a lot more hands-on. Dispatch gives you one continuous Claude conversation across phone and desktop - assign a task while you're out, come back to a polished spreadsheet or report. And with computer use now in research preview, Claude can navigate your screen directly (mouse, keyboard, browser) when there's no connector available, falling back gracefully from API integrations to literal point-and-click.

~99% SOTA memory system

Dhravya Shah's Supermemory scored ~99% on LongMemEval with ASMR, a new agentic retrieval approach that replaces vector search with specialized parallel agents for reading, searching, and answering. The system uses ensemble reasoning (8 prompt variants in one run, a 12-agent decision forest in another) to handle the messy reality of long-term memory: stale facts, timeline reconstruction, contradictions across sessions. Full open-source release is coming in early April.
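For a feel of what ensemble reasoning over prompt variants can look like, here is a toy sketch that asks several variants the same question and keeps the most common answer. Plain majority voting is an assumption here, not Supermemory's published method, and `ensemble_answer` and the variant callables are hypothetical stand-ins for real model calls.

```python
from collections import Counter
from typing import Callable


def ensemble_answer(question: str, variants: list[Callable[[str], str]]) -> str:
    """Ask every variant and return the most common normalized answer."""
    if not variants:
        raise ValueError("need at least one variant")
    # Normalize so that "Paris" and " paris " count as the same vote.
    answers = [variant(question).strip().lower() for variant in variants]
    winner, _votes = Counter(answers).most_common(1)[0]
    return winner
```

In a real system each variant would wrap a differently worded prompt around the same retrieved memories, so a single misleading phrasing is less likely to decide the final answer.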

Tools of the Trade

GitAgent - An open standard that lets you define AI agents as plain files in a git repo: an agent.yaml manifest, a SOUL.md, and folders for skills, tools, memory, and compliance, that you can directly run in Claude Code, OpenAI, LangChain, CrewAI, AutoGen, and OpenClaw. It has a surprisingly deep compliance layer for regulated industries baked right into the spec.

Kapso CLI - Give your AI agent a working WhatsApp number with two commands and no manual Meta setup. Under the hood, it's a full terminal interface to the WhatsApp Business API - messaging, templates, webhooks, conversations, to slot into agentic workflows and CI pipelines.

RCLI - Talk to your Mac, query your docs, no cloud required. It’s a fully local voice AI for macOS that runs a complete STT → LLM → TTS pipeline on Apple Silicon entirely on-device. It ships with 38 macOS actions (Spotify, messages, system controls), local RAG over your docs, and a proprietary Metal GPU engine claiming sub-200ms end-to-end latency.

Awesome LLM Apps - A curated collection of LLM apps with RAG, AI Agents, multi-agent teams, MCP, voice agents, and more. The apps use models from OpenAI, Anthropic, Google, and open-source models like DeepSeek, Qwen, and Llama that you can run locally on your computer.

(Now accepting GitHub sponsorships)

Hot Takes

kabbalistically it’s important that anthropic is in the financial district of san francisco and openai is in the mission but not in the exoteric way that you’re thinking

~ roon

im fully convinced that LLMs are not an actual net productivity boost (today)

they remove the barrier to get started, but they create increasingly complex software which does not appear to be maintainable

so far, in my situations, they appear to slow down long term velocity

That’s all for today! See you tomorrow with more such AI-filled content.

Don’t forget to share this newsletter on your social channels and tag Unwind AI to support us!

PS: We curate this AI newsletter every day for FREE, your support is what keeps us going. If you find value in what you read, share it with at least one, two (or 20) of your friends 😉
