- unwind ai

Turn Claude Code into a Senior Engineer

+ Google open-sources Workspace CLI, OpenAI GPT-5.4

Today’s top AI Highlights:

& so much more!

Read time: 3 mins

AI Tutorial

Six AI agents run my entire life while I sleep.

Not a demo. Not a weekend project.

A real team that works 24/7, making sure I'm never behind. Research done. Content drafted. Code reviewed. Newsletter ready. By the time I open Telegram in the morning, they've already put in a full shift.

By the end of this, you will understand exactly how to build an autonomous AI agent team that runs while you sleep.

We share hands-on tutorials like this every week, designed to help you stay ahead in the world of AI. If you're serious about leveling up your AI skills and staying ahead of the curve, subscribe now and be the first to access our latest tutorials.

Latest Developments

Feel like Claude Code misses the most obvious things even after you’ve given it all the context? That’s probably because your CLAUDE.md file is doing too much!

The real trick isn't writing a better prompt; it's giving your repo a structure that Claude can actually reason about.

This guy put together a modular project template that organizes AI context the way you'd organize code, with separation of concerns, clear boundaries, and progressive disclosure.

Break your project context into layered components: a lean CLAUDE.md as the "north star," reusable skills for repeated workflows like code review and refactoring, hooks for deterministic guardrails, and local CLAUDE.md files placed near risky modules so the model picks up gotchas exactly when it needs them.

When your repo is organized this way, Claude Code can navigate your project with real spatial awareness instead of relying on a bloated prompt to figure things out.
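To make the "progressive disclosure" idea concrete, here is a minimal Python sketch of a general-to-specific lookup. Given a file an agent is about to touch, it collects every CLAUDE.md from the repo root down to that file's directory. This only illustrates the layering idea. It is not how Claude Code actually loads its memory files, and the function name `context_files_for` is hypothetical.

```python
from pathlib import Path


def context_files_for(target: Path, repo_root: Path, name: str = "CLAUDE.md") -> list[Path]:
    """Collect context files from repo_root down to target's directory.

    Hypothetical helper: results are ordered general-to-specific, so the root
    "north star" file comes first and module-level notes come last.
    """
    root = repo_root.resolve()
    target = target.resolve()
    start = target if target.is_dir() else target.parent
    if start != root and root not in start.parents:
        raise ValueError(f"{target} is outside {root}")

    found = []
    current = start
    while True:
        candidate = current / name
        if candidate.is_file():
            found.append(candidate)
        if current == root:
            break
        current = current.parent  # walk one level up toward the repo root
    return list(reversed(found))  # root first, most specific last
```

For a file in `src/auth/`, this returns the root CLAUDE.md followed by `src/auth/CLAUDE.md`. For a module without local notes, it falls back to the root file alone. That is the "gotchas exactly when it needs them" behavior described above.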

Key Highlights:

Lean CLAUDE.md - The root file covers just three things: why the project exists, where things live, and how work gets done. Anything more, and Claude starts losing focus.

Reusable skills - Workflows like code review, refactoring, and release procedures are stored as skill files, so you're not rewriting instructions every session.

Deterministic hooks - Hooks enforce things models shouldn't be trusted to remember, like running formatters, triggering tests, and blocking edits to sensitive directories.

Context where it matters - Local CLAUDE.md files inside modules like `src/auth/` and `src/persistence/` give Claude module-specific knowledge exactly when it enters that part of the codebase.
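For the "deterministic hooks" point, here is a minimal sketch of a guardrail that blocks edits to sensitive directories. It relies on our reading of the Claude Code hooks docs:

- A `PreToolUse` command hook, matched to the `Edit` and `Write` tools in your settings, receives the pending tool call as JSON on stdin, including `tool_input.file_path`.
- Exiting with code 2 blocks the call and feeds stderr back to Claude.

The protected directory list and the `--hook` flag are hypothetical choices for this example. The flag just keeps the script inert when it is run without it.

```python
import json
import sys
from pathlib import PurePosixPath
from typing import Optional, TextIO

# Hypothetical directories the agent must never edit; adjust per repo.
PROTECTED_DIRS = ("secrets", "infra/prod", "migrations")


def blocked_reason(payload: dict, protected=PROTECTED_DIRS) -> Optional[str]:
    """Return a message if the pending edit targets a protected directory, else None."""
    file_path = payload.get("tool_input", {}).get("file_path")
    if not file_path:
        return None
    parts = PurePosixPath(file_path).parts
    for directory in protected:
        d_parts = PurePosixPath(directory).parts
        # Look for the protected path as a contiguous run of path segments.
        for i in range(len(parts) - len(d_parts) + 1):
            if parts[i:i + len(d_parts)] == d_parts:
                return f"Edits under {directory}/ are blocked by a repo hook: {file_path}"
    return None


def main(stdin: TextIO = sys.stdin, stderr: TextIO = sys.stderr) -> int:
    payload = json.load(stdin)  # hook input arrives as JSON on stdin
    reason = blocked_reason(payload)
    if reason:
        print(reason, file=stderr)  # stderr is what Claude sees when blocked
        return 2  # exit code 2 = block this tool call
    return 0  # exit code 0 = allow


if __name__ == "__main__" and "--hook" in sys.argv[1:]:
    sys.exit(main())
```

Matching path segments anywhere in the absolute path is deliberately simple. A stricter version would first make `file_path` relative to the project root, so a checkout that happens to live under a folder named `migrations` isn't blocked wholesale.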

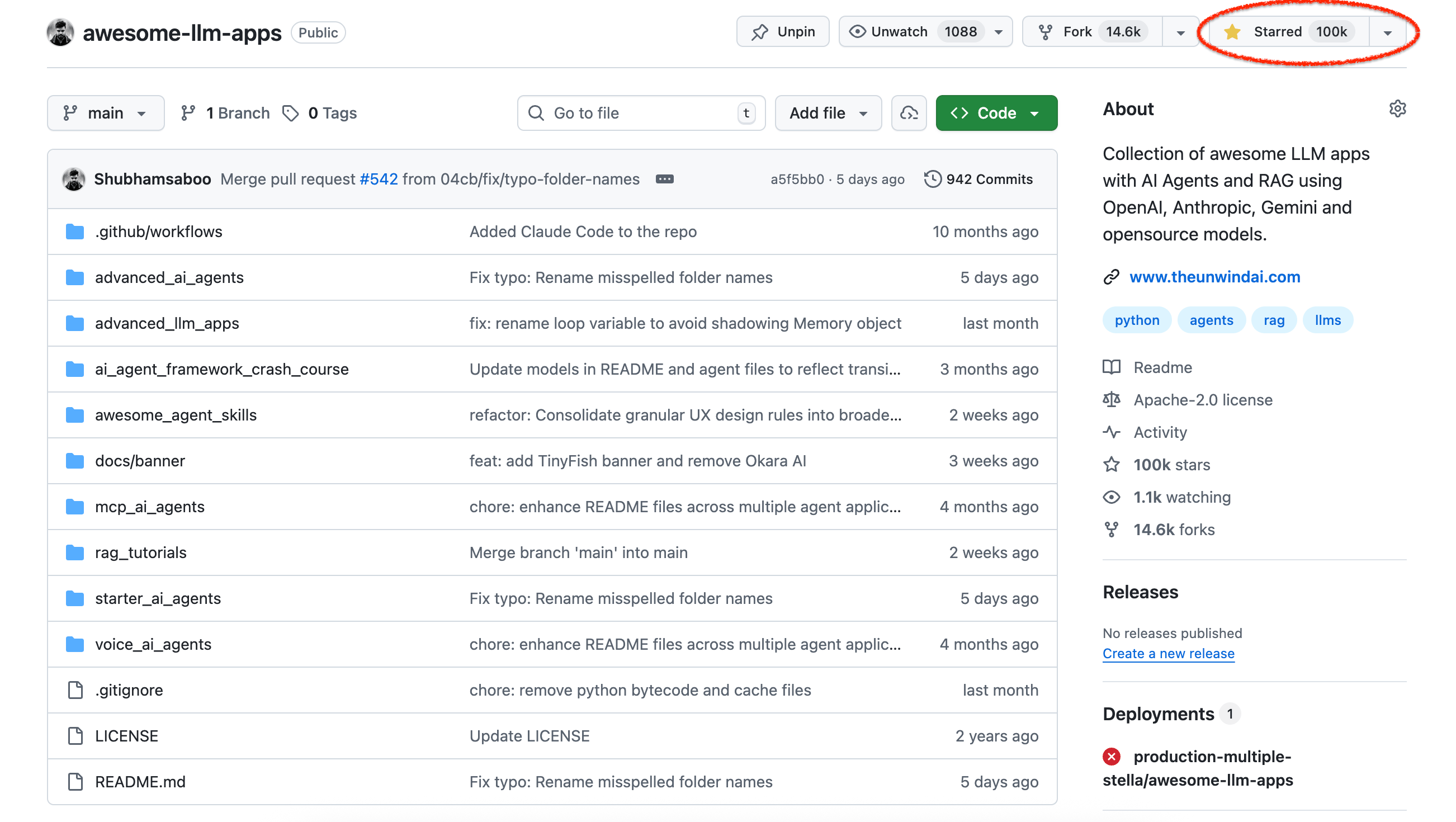

This is wild! Awesome LLM Apps is now among the top 100 most-starred repositories of all time, right up there with projects that practically run the internet.

The repo is a fully open-source, Apache 2.0-licensed collection of hands-on LLM applications - AI agents, multi-agent teams, RAG pipelines, voice agents, MCP tools, and more, each with step-by-step instructions you can clone and run immediately.

100% free, always. You get:

AI agents end-to-end - Starter agents for getting up and running fast, plus advanced builds like browser agents, voice agents, and MCP agents.

Multi-agent teams - Complete implementations of multi-agent orchestration patterns, from simple handoffs to swarm-style coordination.

RAG done right - Covers the full spectrum: basic RAG, agentic RAG, hybrid search, knowledge graph RAG with citations, vision RAG, and dataset routing.

Run anything locally - Every project supports OpenAI, Anthropic, Gemini, xAI, and open-source models like Llama and Qwen, so you can prototype on your laptop and scale to cloud without rewriting code.

Check it out now!

You’ve been drowning in 47 Chrome tabs switching between Gmail, Drive, Docs, and Calendar, or paying $$ for automation SaaS.

But Google open-sourced something that lets AI agents access all of these apps with just one npm install.

This is the Google Workspace CLI (gws), which gives agents (and obv, humans too) structured access to every Workspace API.

The tool is built in Rust and does something very interesting: instead of shipping a static list of commands, it reads Google's Discovery Service at runtime and builds its entire command surface dynamically. That means when Google adds a new API endpoint, gws picks it up automatically.
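The runtime-discovery trick is easy to picture with a small Python sketch. Google's Discovery documents (for example `https://www.googleapis.com/discovery/v1/apis/drive/v3/rest`) describe an API as nested `resources`, each with a `methods` map. Walking that tree yields one command per method. This illustrates the idea only; it is not gws's Rust implementation. The `service resource method` command shape and the `build_commands` name are assumptions, and fetching is left out so the sketch runs offline.

```python
def build_commands(doc: dict) -> dict[tuple[str, ...], dict]:
    """Turn a Discovery document into a map of command path -> method info.

    Command paths look like ("drive", "files", "list"); this shape is an
    assumption for illustration.
    """
    commands: dict[tuple[str, ...], dict] = {}

    def add_methods(methods: dict, prefix: tuple[str, ...]) -> None:
        for name, method in methods.items():
            commands[prefix + (name,)] = {
                "id": method.get("id"),
                "http_method": method.get("httpMethod"),
                "path": method.get("path"),
            }

    def walk(resources: dict, prefix: tuple[str, ...]) -> None:
        for res_name, res in resources.items():
            path = prefix + (res_name,)
            add_methods(res.get("methods", {}), path)
            # Resources can nest, e.g. Gmail's users -> messages.
            walk(res.get("resources", {}), path)

    root = (doc["name"],)
    add_methods(doc.get("methods", {}), root)  # a few APIs have top-level methods
    walk(doc.get("resources", {}), root)
    return commands
```

Here is how you would use it. Feed it the parsed JSON from a Discovery URL, then print `" ".join(path)` for each key. The output lists every method the API currently exposes. That is why new endpoints can appear without a new CLI release.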

It also ships with 40+ pre-built agent skills, plus a built-in MCP server mode so any MCP-compatible client can call Workspace APIs as tools.

Key Highlights:

Dynamic API Coverage - gws doesn't hardcode commands. It fetches Google's Discovery JSON at runtime and builds its CLI surface dynamically, so new Workspace API methods are available immediately without updates.

Agent-First Design - All output is structured JSON, so agents can consume results directly instead of parsing human-oriented text.

40+ Pre-Built Skills - Ships with ready-to-use agent workflows covering Gmail, Drive, Docs, Calendar, Sheets, and Chat, including inbox triage, meeting prep, and doc drafting, plus role-based bundles for executive assistants, project coordinators, and content creators.

Built-in MCP Server - Run `gws mcp -s drive,gmail,calendar` to expose Workspace APIs as structured tools that any MCP-compatible client can call directly.

One-Line Setup - Install globally with `npm install -g @googleworkspace/cli`, authenticate once with `gws auth login`, and you're ready to go. No Rust toolchain needed.

Quick Bites

Schedule tasks in the Claude Code desktop app

Claude Code desktop now lets you schedule recurring tasks that run automatically on your machine. Think daily code reviews, dependency checks, or morning briefings. Each run gets its own session with full access to edit files, run commands, and create commits, plus you can enable worktree isolation so scheduled runs don't touch your uncommitted changes. They'll run as long as your computer is awake.

Cursor Automations to build always-on agents

Cursor just shipped Automations: always-on agents that run on a schedule or trigger on events like Slack messages, Linear issues, merged PRs, or PagerDuty incidents. Think security reviews on every push to main, auto-triaging bug reports, or an agent that writes tests for recently merged code each morning. It's available now; you can create automations at cursor.com/automations or start from a template.

OpenAI GPT-5.4 for reasoning, coding, and agentic workflows

OpenAI dropped GPT-5.4, their new frontier model that rolls reasoning, coding, and agentic workflows into one package. It's the first general-purpose model from OpenAI with native computer-use capabilities (hitting 75% on OSWorld-Verified, above the human benchmark of 72.4%), supports up to 1M tokens of context in the API, and introduces "tool search" so models can dynamically look up tool definitions instead of stuffing them all into the prompt. Available now across ChatGPT, Codex, and the API, with GPT-5.4 Pro for enterprise-tier users who want maximum performance.
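Tool search is easier to reason about with a toy version. The sketch below is not OpenAI's API. It only illustrates the idea: keep full tool definitions in a registry and surface the few that match a request, instead of putting every definition in the prompt. `Tool`, `ToolRegistry`, and the keyword-overlap scoring are all hypothetical; a production system would more likely rank with embeddings.

```python
from dataclasses import dataclass, field


@dataclass
class Tool:
    name: str
    description: str
    parameters: dict = field(default_factory=dict)  # full JSON schema would live here


class ToolRegistry:
    """Keeps full tool definitions out of the prompt until they are searched for."""

    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def search(self, query: str, limit: int = 3) -> list[Tool]:
        # Naive keyword-overlap score between the query and name + description.
        terms = set(query.lower().split())
        scored = []
        for tool in self._tools.values():
            text = tool.name.replace("_", " ") + " " + tool.description
            score = len(terms & set(text.lower().split()))
            if score:
                scored.append((score, tool.name, tool))
        # Highest score first; ties broken alphabetically for stable output.
        scored.sort(key=lambda item: (-item[0], item[1]))
        return [tool for _, _, tool in scored[:limit]]
```

Only the returned definitions would then be passed to the model. Prompt size therefore scales with the request rather than with the size of the whole tool catalog.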

Use your existing enterprise Anthropic budget for third-party tools

Anthropic launched the Claude Marketplace (limited preview), letting enterprises use their existing Anthropic spend commitments to purchase third-party Claude-powered tools, including GitLab, Harvey, Snowflake, Replit, Lovable, and Rogo. It's basically consolidated billing for your AI stack: one invoice, multiple partners, less procurement friction.

Tools of the Trade

Vercel's json-render - Generate dynamic, personalized UIs from prompts without sacrificing reliability. Define a set of UI components, and the AI picks and assembles them based on user prompts. So instead of building every view yourself, you set the building blocks and the model does the layout. It works across MCP Apps clients like Claude, ChatGPT, and VS Code.

claude-replay - Convert Claude Code session transcripts into embeddable HTML replays. Each replay is a single self-contained HTML file with no external dependencies, so you can email it, host it anywhere, or embed it in documentation.

React Grab - Hover over any UI element in your browser and instantly copy its file path, component name, and source code to your clipboard — ready to paste into Claude Code, Cursor, or Copilot. It's open source, works with Next.js and Vite, and gives coding agents precise context instead of making them search your codebase.

Awesome LLM Apps - A curated collection of LLM apps with RAG, AI Agents, multi-agent teams, MCP, voice agents, and more. The apps use models from OpenAI, Anthropic, Google, and open-source models like DeepSeek, Qwen, and Llama that you can run locally on your computer.

(Now accepting GitHub sponsorships)
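The reliability angle in Vercel's json-render (above) comes from constraining the model to a fixed set of components. Here is a minimal Python sketch of that idea. It is not json-render's actual API, and the catalog plus the node shape (`type`, `props`, `children`) are hypothetical. The model's output is treated as a JSON tree and checked against an allowlist before anything renders.

```python
# Hypothetical component catalog: component name -> allowed prop names.
CATALOG = {
    "Card": {"title"},
    "Text": {"content"},
    "Button": {"label", "action"},
    "Stack": {"direction"},
}


def validate(node: dict, path: str = "root") -> list[str]:
    """Return a list of problems found in a model-generated UI tree."""
    kind = node.get("type")
    if kind not in CATALOG:
        # Unknown components are rejected outright; their children are not inspected.
        return [f"{path}: unknown component {kind!r}"]
    errors = []
    for prop in node.get("props", {}):
        if prop not in CATALOG[kind]:
            errors.append(f"{path}: {kind} has no prop {prop!r}")
    for i, child in enumerate(node.get("children", [])):
        errors.extend(validate(child, f"{path}.children[{i}]"))
    return errors
```

Anything that fails validation can be rejected or sent back to the model for a retry. That is what keeps a generated UI predictable even though the layout itself is up to the model.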

Hot Takes

in my culture we don't say "I love you". we say "I left a comment on your PR that's a prompt you can just send straight to Claude Code"

~ Thariq

“search” across everything is miscalibrated right now for medium wealthy people because models let you spend more compute for better semantic matching, but that is not happening by default on eg Netflix or Zillow or what have you

~ roon

That’s all for today! See you tomorrow with more such AI-filled content.

Don’t forget to share this newsletter on your social channels and tag Unwind AI to support us!

PS: We curate this AI newsletter every day for FREE, your support is what keeps us going. If you find value in what you read, share it with at least one, two (or 20) of your friends 😉
